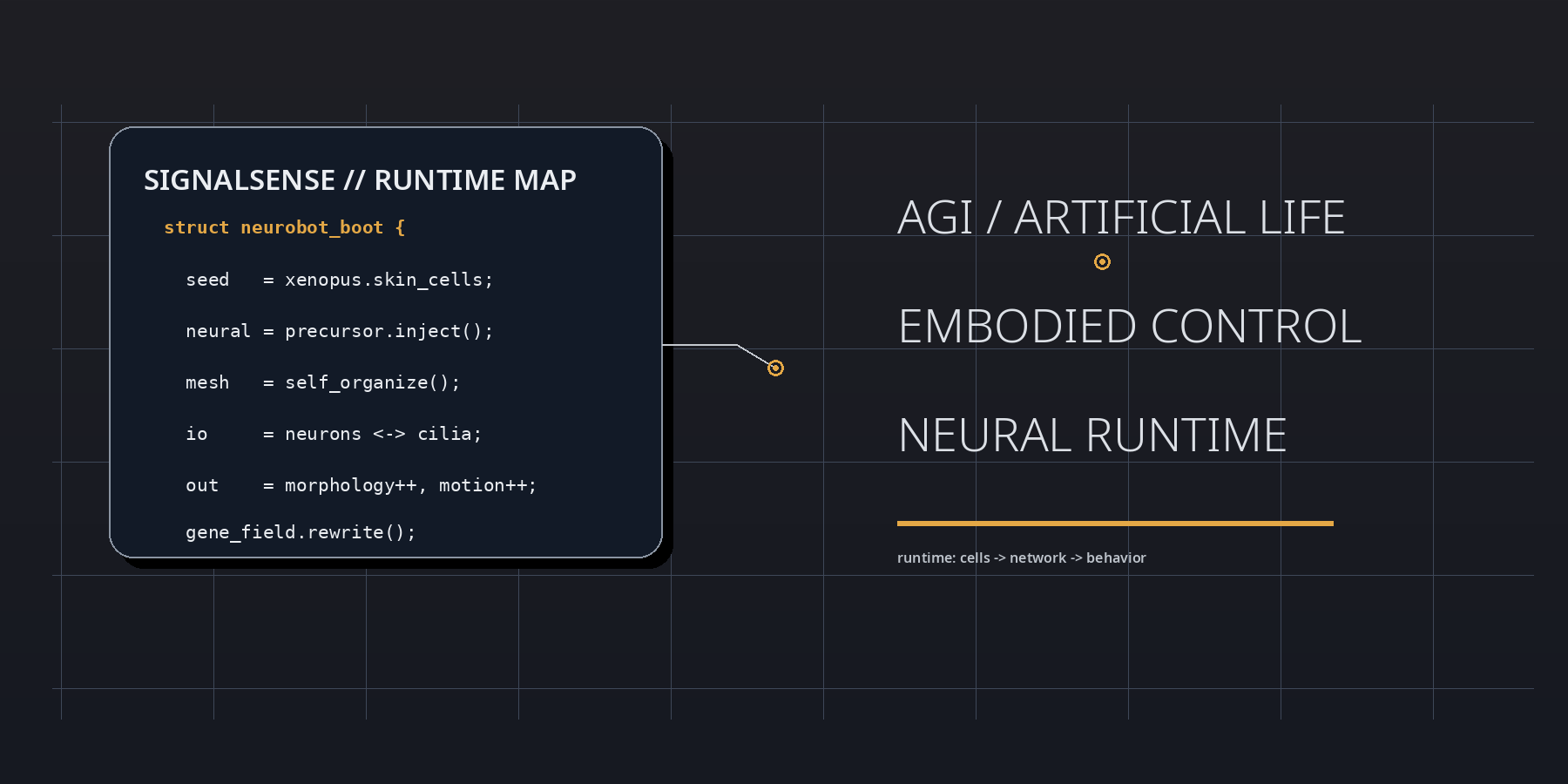

Neurobots as a Signal of Embodied AGI: A SignalSense analysis of self-organized nervous systems, morphogenetic control, and the next runtime for Artificial Life

Abstract

This report reads the frog-cell neurobot result as a control-architecture event. The essential signal is that a self-organized nervous layer can emerge inside an evolutionarily unfamiliar living body and alter its operating state. Morphology shifts, movement complexity increases, and gene activity is rewritten. For SignalSense, this is a frontier marker for the convergence of AGI, Artificial Life, and programmable biological runtimes.

SIGNALSENSE ATELIER // FIELD REPORT

March 2026

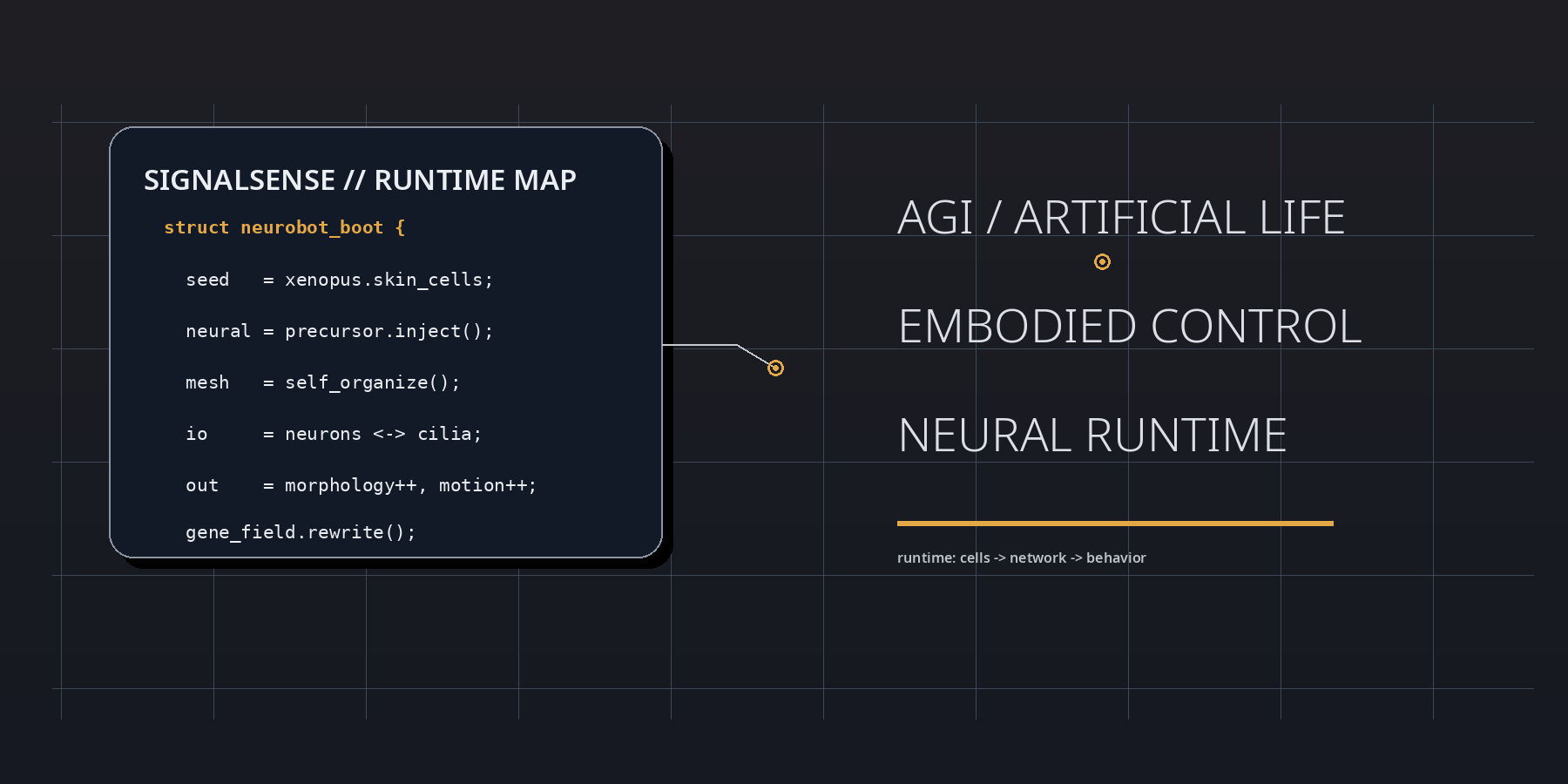

00 // EXECUTIVE SIGNAL

At boot, the neurobot result is best parsed as a runtime event inside living matter. Frog embryonic tissues were reassembled into motile biobots, neuronal precursors were injected during the healing window, and the implanted cells did not remain inert. They differentiated, connected, extended projections, and participated in a control layer that reshaped morphology, movement, and transcriptional state. In SignalSense terms, the experiment suggests that agency can be upgraded by modifying interface topology rather than by rewriting the genome line by line.

The strategic significance lies in the delta between substrate and behavior. The genome is not radically rewritten, yet the behavioral register moves. Shape elongates. Motility shifts. Spontaneous action becomes more complex. Global gene expression changes. This means that the operative variable is not merely code in the narrow genetic sense, but code plus placement, code plus geometry, code plus signal-routing context. The neurobot is therefore not just a biological robot; it is a proof that development can compile a fresh control stack when a living assembly is placed inside an unfamiliar architecture.

For AGI and Artificial Life, this matters because it weakens the old hardware-software split. A controller is not cleanly separable from a body. Morphology is not passive housing. The body, the network, and the environment co-author one another. SignalSense reads this as a frontier condition: the next intelligent systems may be less like static machines that execute commands and more like guided assemblies that grow capabilities through local feedback, adaptive coupling, and self-organized wiring.

01 // MORPHOGENETIC RUNTIME

A SignalSense reading begins with the proposition that living systems are not assembled like dead machines. They are negotiated into existence. The neurobot embodies this principle with unusual clarity. During the brief repair interval in which the original skin-derived construct is closing and stabilizing, neuronal precursors are inserted into the internal space. From there, a new network emerges without a historical blueprint fine-tuned by natural selection for that exact body plan. The result is not chaos, and it is not simple recapitulation of the frog embryo. It is a third regime: constrained novelty.

This is why the work deserves attention beyond developmental biology. A self-organized nervous system inside a noncanonical body is a live demonstration that biological matter contains a larger reachable state space than classical anatomy suggests. The organismal form that appears in nature is only one subset of what the genome can support under given field conditions. Rearrangement exposes latent options. SignalSense therefore treats neurobots as probes of possibility space. They are sensors for the hidden elasticity of multicellular intelligence.
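A toy Boolean network makes the "reachable state space" point concrete. This is a hypothetical three-node example, not a model of frog tissue. The update rules in `update` stand in for a fixed genome, and the single `field` bit stands in for tissue context. The rules stay fixed and only the context changes, yet the number of states the system can reach changes with it.

```python
# Toy analogy only: a hypothetical three-node Boolean network, not a frog-cell model.
# The rules in `update` are held fixed ("same genome"); `field` is the context input.

def update(state, field):
    """Synchronous update of nodes (a, b, c) given an external field bit."""
    a, b, c = state
    return (int(field or c), int(a and not c), int(b))

def reachable(start, field, max_steps=64):
    """Collect every state visited from `start` until the trajectory repeats."""
    seen = {start}
    state = start
    for _ in range(max_steps):
        state = update(state, field)
        if state in seen:  # deterministic dynamics: a revisit means a cycle was hit
            break
        seen.add(state)
    return seen

if __name__ == "__main__":
    canonical = reachable((0, 0, 0), field=0)   # native context
    rearranged = reachable((0, 0, 0), field=1)  # altered context, identical rules
    print(len(canonical), len(rearranged))      # 1 5
```

In the native context the network sits at a single fixed point. In the altered context the same rules cycle through five states, which is a small-scale picture of rearrangement exposing latent options.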

The low-level lesson can be stated almost as pseudocode. Inject precursor cells. Permit healing. Allow local rules to propagate. Observe whether the network finds stable routes into action. The neurobot says yes. That matters because it shows that nervous organization is not exclusively a long-horizon evolutionary artifact. It is also a local solution produced by interaction rules, tissue constraints, and developmental gradients when the right raw materials are present.
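The four steps can be sketched as a toy spatial-graph model. Every detail is an illustrative assumption. Cells are points in a unit square. The "local rule" is simply "link any two cells closer than `radius`", which produces a random geometric graph. The healing window is not modeled. A "stable route into action" is read as a path from cells near one edge to cells near the opposite edge. The only claim is that whether routes form depends on the local interaction range, not on a global blueprint.

```python
import random
from collections import deque

def inject(n_cells, seed=0):
    """Toy 'inject' step: scatter precursor cells uniformly over a unit square."""
    rng = random.Random(seed)
    return [(rng.random(), rng.random()) for _ in range(n_cells)]

def wire_locally(cells, radius):
    """Toy 'local rule' step: link any two cells closer than `radius`."""
    r2 = radius * radius
    adj = [[] for _ in cells]
    for i, (xi, yi) in enumerate(cells):
        for j in range(i + 1, len(cells)):
            xj, yj = cells[j]
            if (xi - xj) ** 2 + (yi - yj) ** 2 <= r2:
                adj[i].append(j)
                adj[j].append(i)
    return adj

def finds_route(cells, adj, band=0.1):
    """Toy 'observe' step: breadth-first search from the left band to the right band."""
    targets = {i for i, (x, _) in enumerate(cells) if x > 1 - band}
    frontier = deque(i for i, (x, _) in enumerate(cells) if x < band)
    seen = set(frontier)
    while frontier:
        i = frontier.popleft()
        if i in targets:
            return True
        for j in adj[i]:
            if j not in seen:
                seen.add(j)
                frontier.append(j)
    return False

if __name__ == "__main__":
    cells = inject(300, seed=0)
    for radius in (0.02, 0.15):
        print(radius, finds_route(cells, wire_locally(cells, radius)))
```

With 300 cells, a radius of 0.02 leaves most cells isolated, so no route spans the body. A radius of 0.15 gives each cell roughly twenty neighbors, which is well above the percolation regime, so a route is expected to form.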

SIGNAL FRAME // WHAT CHANGED

The neural insertion did not merely add a new tissue type. It changed the operating grammar of the construct: morphology, motility, movement complexity, drug response, and gene-expression profiles were all perturbed. In engineering terms, the neurobot is a body whose firmware is partially written by developmental dynamics in real time.

02 // THE CONTROL STACK: BODY, CILIA, NEURONS, FIELD

The original biobot already contains a locomotor mechanism: multiciliated cells on the surface drive motion through coordinated beating. Goblet cells, ionocytes, and small secretory cells help maintain the surface chemistry and ionic regime required for motion. The neural insertion does not replace that machinery. Instead, it overlays a fresh coordination layer on top of it. The most interesting point is exactly this layered architecture: neurobots are not miniature vertebrates; they are hybrid control stacks in which a newly formed neural mesh couples into pre-existing epithelial actuators.

That is why the more elongated forms and more complex movement patterns are so informative. They indicate that the neurons are not decorative passengers. They appear to modulate, bias, or reorganize the ciliary field and associated surface-cell behavior. In systems language, the neurobot is a distributed actuator array receiving input from a self-grown internal network. This converts a simple motile sphere into a more nuanced agent with richer spontaneous trajectories and more variable behavioral signatures.
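A toy 2D swimmer illustrates the layered-control reading. It is a hypothetical sketch, not the study's analysis. The "ciliary layer" supplies constant thrust plus small turning noise. An optional "neural layer" overlays a periodic turning bias scaled by `neural_gain`. "Movement complexity" is proxied by path tortuosity, which is path length divided by net displacement. Both runs draw the same noise sequence from the same seed, so the overlay is the only difference.

```python
import math
import random

def tortuosity(steps, neural_gain, period=20, speed=1.0, noise=0.01, seed=0):
    """Toy swimmer: return path length / net displacement (1.0 = straight line).

    Ciliary layer: constant thrust plus small Gaussian turning noise.
    Neural layer (hypothetical): a periodic turning bias scaled by `neural_gain`.
    """
    rng = random.Random(seed)
    x = y = heading = 0.0
    for t in range(steps):
        turn = rng.gauss(0.0, noise)                              # ciliary baseline
        turn += neural_gain * math.sin(2 * math.pi * t / period)  # neural overlay
        heading += turn
        x += speed * math.cos(heading)
        y += speed * math.sin(heading)
    return (steps * speed) / math.hypot(x, y)

if __name__ == "__main__":
    print(f"cilia only:     {tortuosity(500, neural_gain=0.0):.3f}")
    print(f"cilia + neural: {tortuosity(500, neural_gain=0.3):.3f}")
```

The actuators are identical in both runs. The overlay only biases how they are steered, which matches the "modulate, bias, or reorganize" reading above rather than a replacement of the locomotor machinery.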

Such architectures are directly relevant to Artificial Life. Most A-Life platforms still privilege one of two models: either rigid robotics with explicit controllers, or simulated agents with abstract bodies. Neurobots point toward a third category, where the body itself remains soft, developmental, and partly self-defining. Control is not fully prewritten; it is cultivated. In that regime, design work shifts from specifying final form to specifying generative conditions.

03 // GENE ACTIVITY AS SYSTEM LOG

The transcriptomic shift is strategically decisive because it gives a molecular log of system reconfiguration. Once the nervous layer is integrated, the neurobot does not simply move differently; it also reads out differently at the level of gene expression. That means the inserted neural network is not an isolated module. It enters the cross-talk space of the entire construct. SignalSense interprets this as a distributed rewrite event. New wiring changes not only output behavior but the internal semantic map by which the living system stabilizes itself.
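Reading gene expression as a system log can be sketched as a diff between two expression snapshots. This is a deliberately naive screen built on stated assumptions. The inputs are per-gene counts for a baseline construct and a neural-insertion construct, and the gene names are hypothetical. A log2 fold change with a pseudocount stands in for a real differential-expression pipeline, which would also need normalization, replicates, and statistical testing.

```python
import math

def log2_fold_changes(baseline, perturbed, pseudocount=1.0):
    """Per-gene log2((perturbed + p) / (baseline + p)) over genes present in both."""
    return {
        gene: math.log2((perturbed[gene] + pseudocount) / (baseline[gene] + pseudocount))
        for gene in baseline.keys() & perturbed.keys()
    }

def system_log_diff(baseline, perturbed, threshold=1.0):
    """Flag genes whose |log2 fold change| meets the threshold, like a diff of two logs."""
    changes = log2_fold_changes(baseline, perturbed)
    return {
        gene: ("up" if lfc > 0 else "down")
        for gene, lfc in sorted(changes.items())
        if abs(lfc) >= threshold
    }

if __name__ == "__main__":
    # Invented counts for illustration only; not data from the study.
    baseline = {"gene_a": 100, "gene_b": 50, "gene_c": 10}
    neural = {"gene_a": 105, "gene_b": 200, "gene_c": 2}
    print(system_log_diff(baseline, neural))  # {'gene_b': 'up', 'gene_c': 'down'}
```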

One particularly provocative observation is the upregulation of genes associated with visual-system development and visual processing. The current evidence does not justify any inflated claim that neurobots already possess vision in a mature or verified sense. Yet the signal remains important. It implies that once developmental trajectories are recontextualized, latent modules can become active outside their canonical anatomical setting. The body is scanning an enlarged solution space and activating machinery that classical taxonomy might not expect.

For AGI discourse, the analogy is immediate. Capability is often treated as if it were stored cleanly inside a central model. But the neurobot story suggests a different architecture: capability can emerge from the interaction between a control layer and a body whose interfaces are plastic.

In other words, some functions may not preexist as isolated modules waiting to be switched on; they may appear when a system crosses a topological threshold in coupling. This is a profound design clue for future hybrid agents.
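A standard toy counterpart for "a function appears only past a coupling threshold" is the Kuramoto model of coupled oscillators. It is used here only as an analogy and is not part of the source. Below a critical coupling the population stays incoherent. Above it, a synchronized cluster appears. The sketch integrates the mean-field form dtheta_i/dt = omega_i + K r sin(psi - theta_i) with unit-variance Gaussian natural frequencies, for which the critical coupling is K_c = 2/(pi g(0)), about 1.6. The population size, step count, and seed are arbitrary choices.

```python
import cmath
import math
import random

def order_parameter(phases):
    """r in [0, 1]: near 0 for incoherent phases, 1 for perfect synchrony."""
    return abs(sum(cmath.exp(1j * p) for p in phases)) / len(phases)

def kuramoto_r(coupling, n=300, steps=1000, dt=0.05, seed=1):
    """Euler-integrate the mean-field Kuramoto model and return the final r."""
    rng = random.Random(seed)
    omega = [rng.gauss(0.0, 1.0) for _ in range(n)]            # natural frequencies
    theta = [rng.uniform(0.0, 2 * math.pi) for _ in range(n)]  # random initial phases
    for _ in range(steps):
        z = sum(cmath.exp(1j * p) for p in theta) / n          # mean field r * e^{i psi}
        r, psi = abs(z), cmath.phase(z)
        theta = [p + dt * (w + coupling * r * math.sin(psi - p))
                 for p, w in zip(theta, omega)]
    return order_parameter(theta)

if __name__ == "__main__":
    for k in (0.5, 1.0, 2.0, 4.0):
        print(f"K={k}: r={kuramoto_r(k):.2f}")
```

Nothing in the oscillators changes between runs except the coupling strength. Coherence is absent below the threshold and present above it, which is the sense of a capability appearing at a threshold in coupling rather than being stored in any single unit.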

LOW-LEVEL VIEW // EMBODIED COMPUTATION

A neurobot can be read as an active data structure. Surface cells implement propulsion, secretion, and ionic regulation. Neural cells implement adaptive coupling. Morphology stores boundary conditions. Gene expression records state transitions. Behavior is the compiled trace of many parallel processes interacting across a living substrate.
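The "active data structure" reading can be written down literally. This is a hypothetical Python sketch, not a biological model, and all names and numbers are illustrative assumptions. Surface roles carry a baseline activity. A per-role gain carries neural coupling. An elongation factor stands in for morphology. An append-only `transitions` list stands in for expression changes, and a `trace` list stands in for behavior.

```python
from dataclasses import dataclass, field

# Hypothetical data-structure reading of a neurobot; names and numbers are illustrative.
@dataclass
class NeurobotRecord:
    # Surface layer: baseline activity of each surface role.
    baseline: dict = field(default_factory=lambda: {"propulsion": 1.0, "secretion": 0.5, "ionic": 0.5})
    # Neural layer: coupling gain from neural activity onto each surface role.
    coupling: dict = field(default_factory=dict)
    # Morphology as a boundary condition: 1.0 = spherical, >1.0 = elongated.
    elongation: float = 1.0
    # Current activity, expression-like transition log, and behavior trace.
    state: dict = field(init=False, default_factory=dict)
    transitions: list = field(init=False, default_factory=list)
    trace: list = field(init=False, default_factory=list)

    def __post_init__(self):
        self.state = dict(self.baseline)

    def step(self, t, neural_activity):
        """Apply one tick of neural modulation, log changed roles, record behavior."""
        for role, base in self.baseline.items():
            new = base * (1.0 + self.coupling.get(role, 0.0) * neural_activity)
            if new != self.state[role]:
                self.transitions.append((t, role, self.state[role], new))
                self.state[role] = new
        # Behavior output: propulsion scaled by body shape (a crude stand-in).
        self.trace.append(self.state["propulsion"] * self.elongation)

if __name__ == "__main__":
    bot = NeurobotRecord(coupling={"propulsion": 0.5}, elongation=1.2)
    for t, activity in enumerate([0.0, 1.0, 1.0]):
        bot.step(t, activity)
    print(bot.transitions)
    print(bot.trace)
```

With zero coupling the transition log stays empty however active the neural layer is. Once a coupling gain is set, the same activity leaves entries in the log and shifts the behavior trace, so the log records what the coupling did.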

04 // AGI × ARTIFICIAL LIFE: WHAT THIS PORTS FORWARD

SignalSense does not read neurobots as evidence of sentience, general intelligence, or anything close to a finished artificial organism. The correct read is more interesting and more operational. Neurobots indicate that living matter can be scaffolded into new classes of controller-bearing entities that exhibit self-organized coordination in bodies evolution did not optimize for that exact role. This extends the design horizon for AGI/Artificial-Life interfaces.

First, neurobots suggest that future intelligent systems may be grown as much as built. The designer will specify gradients, constraints, precursor distributions, and channel couplings, then let developmental rules perform part of the construction work. Second, the experiment implies that morphology and control must be co-engineered. A body that can change shape, alter surface-cell expression, and feed back into its own network is not peripheral to intelligence. It is part of the intelligence. Third, the result points toward sensorimotor fields in which biological tissues act as adaptive front ends for machine reasoning, or conversely, where machine models help guide the developmental search of living substrates.
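Specifying generative conditions rather than final form can be sketched as a design-space enumeration. The parameters mirror the variables named above: timing, placement, gradients, and coupling. However, `GrowthSpec`, its fields, and the 24-hour healing window are hypothetical placeholders, not values from the study.

```python
from dataclasses import dataclass
from itertools import product

@dataclass(frozen=True)
class GrowthSpec:
    """Hypothetical generative conditions for one construct (not a real protocol)."""
    injection_hour: float     # when precursors are added, in hours after assembly
    placement: str            # where precursors are seeded, e.g. "center"
    gradient_strength: float  # strength of an imposed cue gradient, 0..1
    coupling_gain: float      # assumed neural-to-actuator coupling strength

def design_grid(hours, placements, gradients, gains, healing_window=(0.0, 24.0)):
    """Yield every combination whose injection time falls inside the healing window."""
    start, end = healing_window  # placeholder window, not a measured value
    for hour, place, grad, gain in product(hours, placements, gradients, gains):
        if start <= hour <= end:
            yield GrowthSpec(hour, place, grad, gain)

if __name__ == "__main__":
    for spec in design_grid([2.0, 12.0, 30.0], ["center", "periphery"], [0.0, 0.5], [0.1]):
        print(spec)
```

The 30-hour option falls outside the placeholder window and is filtered out, which leaves eight candidate specifications. In this framing each candidate would be grown and observed rather than built to a final drawing.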

This creates an unusual convergence zone. On one side sits AGI, with its scaling laws, world models, and planning frameworks. On the other sits Artificial Life, with developmental plasticity, self-repair, embodied adaptation, and open-ended morphogenesis. Neurobots sit near the handshake point. They do not collapse the two domains into one, but they make visible the bridge architecture: guided self-organization in living matter.

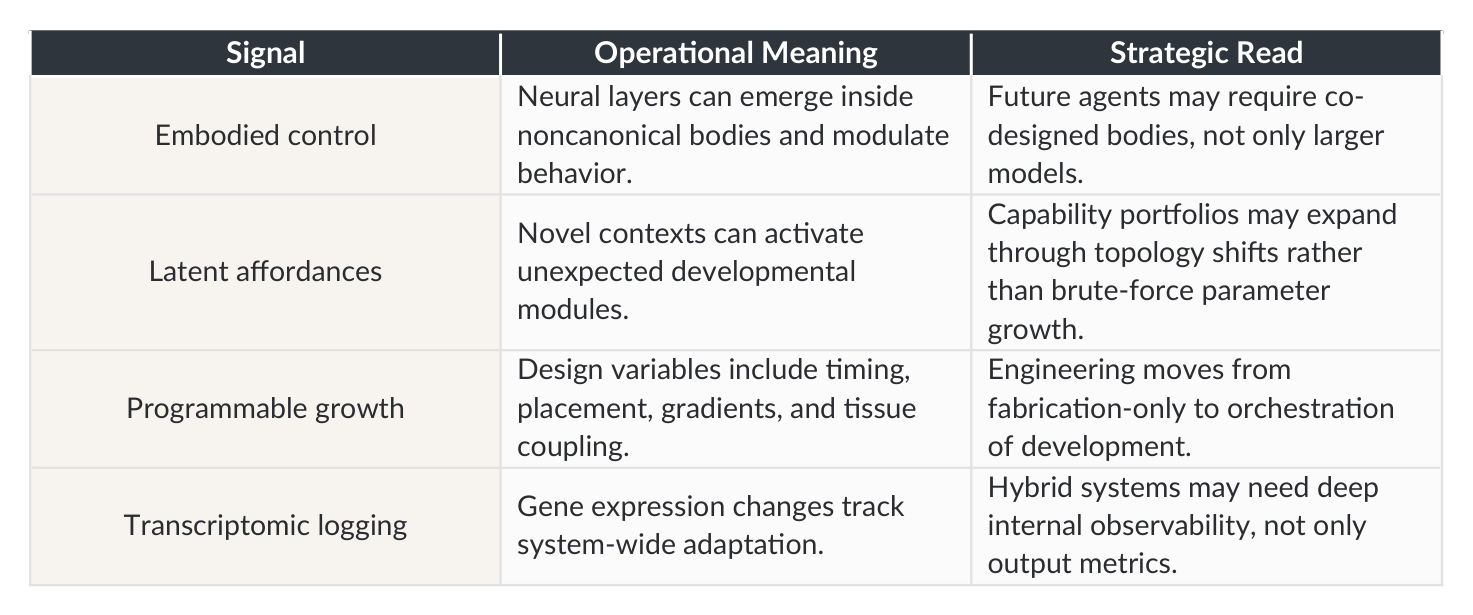

| Signal | Operational Meaning | Strategic Read |
|---|---|---|
| Embodied control | Neural layers can emerge inside noncanonical bodies and modulate behavior. | Future agents may require co-designed bodies, not only larger models. |
| Latent affordances | Novel contexts can activate unexpected developmental modules. | Capability portfolios may expand through topology shifts rather than brute-force parameter growth. |
| Programmable growth | Design variables include timing, placement, gradients, and tissue coupling. | Engineering moves from fabrication-only to orchestration of development. |
| Transcriptomic logging | Gene expression changes track system-wide adaptation. | Hybrid systems may need deep internal observability, not only output metrics. |

05 // STRATEGIC MAP FOR SIGNALSENSE

From a SignalSense standpoint, the neurobot result belongs to a larger pattern. Across biology, robotics, and machine intelligence, the center of gravity is moving from static components to adaptive stacks. Sensors are no longer just sensors; they are active surfaces. Controllers are no longer only centralized brains; they are dynamic meshes. Bodies are no longer chassis; they are information-bearing fields. Neurobots compress this pattern into a small and vivid experimental object.

The immediate opportunity set spans regenerative medicine, programmable repair, and biologically native micromachines. Constructs made from patient-derived cells could eventually be tuned to carry signals, clear debris, modulate tissue niches, or deliver local interventions. Yet the deeper opportunity is epistemic. Neurobots provide a tractable platform for testing what kinds of behavior become reachable when new control layers are grown into living collectives. They are experimental sandboxes for discovering the grammar of multicellular agency.

For an atelier oriented toward geopolitics, frontier technology, and strategic foresight, the key issue is not only the lab result itself but the direction of travel. Once living constructs become easier to scaffold, observe, and guide, the question shifts from feasibility to governance. Which developmental pathways should be permitted? Which sensory competencies are acceptable? Which control handoffs between human operators, machine systems, and living assemblies are auditable? Neurobots make these policy questions arrive earlier than many institutions expect.

06 // RISK, GOVERNANCE, AND DESIGN DISCIPLINE

Any serious analysis must preserve design discipline. The current neurobots remain primitive, short-lived, and experimentally bounded. They are not autonomous organisms in the broad ecological sense, nor are they remotely equivalent to human neural systems. Strategic clarity requires respecting that scale. The wrong move is hype. The right move is architecture literacy.

Three governance principles follow. First, interpretability must evolve alongside capability. If living constructs begin to develop control layers that alter morphology and gene activity, researchers need observability at the levels of behavior, circuitry, and transcriptional state. Second, developmental interventions should be bounded by explicit task environments and containment logic. Third, applications should be staged through domains where benefit is concrete and auditable, especially repair, clearing, sensing, and local modulation in controlled settings.

For AGI-linked futures, a fourth principle becomes necessary: hybrid control accountability. If machine intelligence eventually participates in designing, tuning, or steering living agents, then the chain of authorship must remain legible. Who specified the precursor distribution? Who tuned the sensory threshold? Who approved the adaptive objective? In a world of scaffolded biological runtimes, governance must attach not just to final products but to generative protocols.
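One way to keep the chain of authorship legible is a hash-chained, append-only record of generative-protocol decisions. Each entry commits to the hash of the entry before it, so a silent edit breaks verification. This is a minimal sketch using Python's standard `hashlib` and `json`. `ProtocolLedger`, the actor names, and the actions are hypothetical, and a real deployment would also need signatures, timestamps, and access control.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def _digest(body):
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

class ProtocolLedger:
    """Hypothetical append-only, hash-chained log of generative-protocol decisions."""

    def __init__(self):
        self.entries = []

    def record(self, author, action, params):
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"author": author, "action": action, "params": dict(params), "prev": prev}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self):
        """Recompute every hash; an edited or reordered entry breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("author", "action", "params", "prev")}
            if entry["prev"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True

if __name__ == "__main__":
    ledger = ProtocolLedger()
    ledger.record("designer_a", "set_precursor_distribution", {"density": 0.3})
    ledger.record("model_x", "tune_sensory_threshold", {"threshold": 0.7})
    ledger.record("reviewer_b", "approve_objective", {"objective": "debris_clearing"})
    print(ledger.verify())  # True
    ledger.entries[1]["params"]["threshold"] = 0.9  # silent after-the-fact edit
    print(ledger.verify())  # False
```

The three example entries answer the three questions above: who specified, who tuned, and who approved. The chain makes any later rewrite of those answers detectable.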

07 // FINAL COMMIT

The neurobot result should be read as a small but high-value signal. A novel nervous system can self-organize inside a synthetic living body, alter its morphology, perturb its motion, and rewrite gene activity. This is not a curiosity at the margins of biology. It is an existence proof that developmental matter can assemble fresh control architectures when placed in an engineered context.

SignalSense therefore reads neurobots as a precursor technology for embodied intelligence. The durable lesson is that agency is not only encoded. It is compiled across tissue, topology, timing, and feedback. In low-level terms, the future will not be written solely as larger models running on harder silicon. Part of it will be grown as adaptive wetware whose control logic emerges inside the loop. Neurobots do not complete that future. They initialize it.

Selected Sources

Fotowat H. et al. Engineered Living Systems With Self-Organizing Neural Networks: From Anatomy to Behavior and Gene Expression. Advanced Science, 2026. DOI: 10.1002/advs.202508967.

Wyss Institute at Harvard University. Toward autonomous self-organizing biological robots with a nervous system. March 2026.

Tufts University. Scientists create novel organism with primitive nervous system. March 2026.
