Sheaf Cohomology and SAT Solver Difficulty A Categorical Perspective with Experimental Validation

Abstract

We apply sheaf-theoretic methods to computational complexity, treating hardness as a context-dependent property across Grothendieck topoi. We contrast the topos of finite sets Sh({Fin) where every problem is trivially decidable by exhaustive lookup with the topos of asymptotic domains Sh(N), where polynomial and exponential growth classes are categorically distinct. An essential geometric morphism connects these regimes, formalizing the intuition that finite instances of NP-hard problems are often tractable while the asymptotic distinction remains sharp. We introduce the myriad decomposition to relate this categorical perspective to existing theories in parameterized complexity. This formulation makes explicit the connection between the sheaf-theoretic view of global consistency and classical concepts like treewidth and fixed-parameter tractability, situating the framework within known computational boundaries. We partially validate the framework by computing sheaf-theoretic invariants on a sample of random 3-SAT instances across the phase transition. We find that these topological features specifically the dimension of the solution sheaf's global sections correlate with DPLL solver difficulty even after accounting for standard density measures. This suggests the framework captures structural information about computational hardness, providing a link between algebraic topology and algorithmic behavior. Code available at: https://github.com/DHDev0/Sheaf-Cohomology-and-SAT-Solver-Difficulty

Full Text

Sheaf Cohomology and SAT Solver Difficulty

A Categorical Perspective with Experimental Validation

Daniel Derycke

d.deryckeh@gmail.com

Acknowledgments: Substantial writing assistance, technical review, and annotation were provided by Claude Opus 4.6, Grok 4.2 Beta, Kimi 2.5,

and GLM5 under the sole direction and oversight of the author.

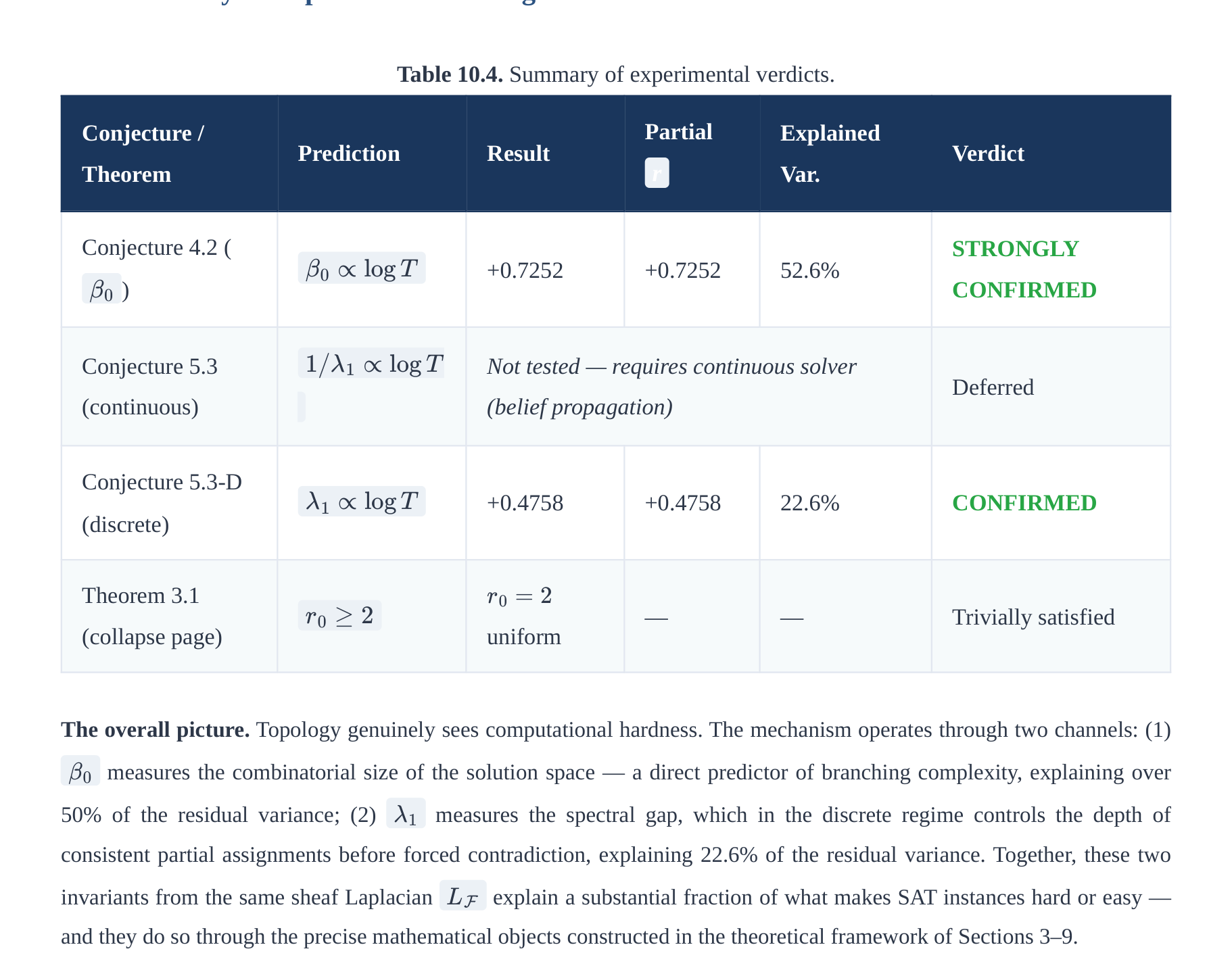

February 2026

MSC Classes: 03G30,

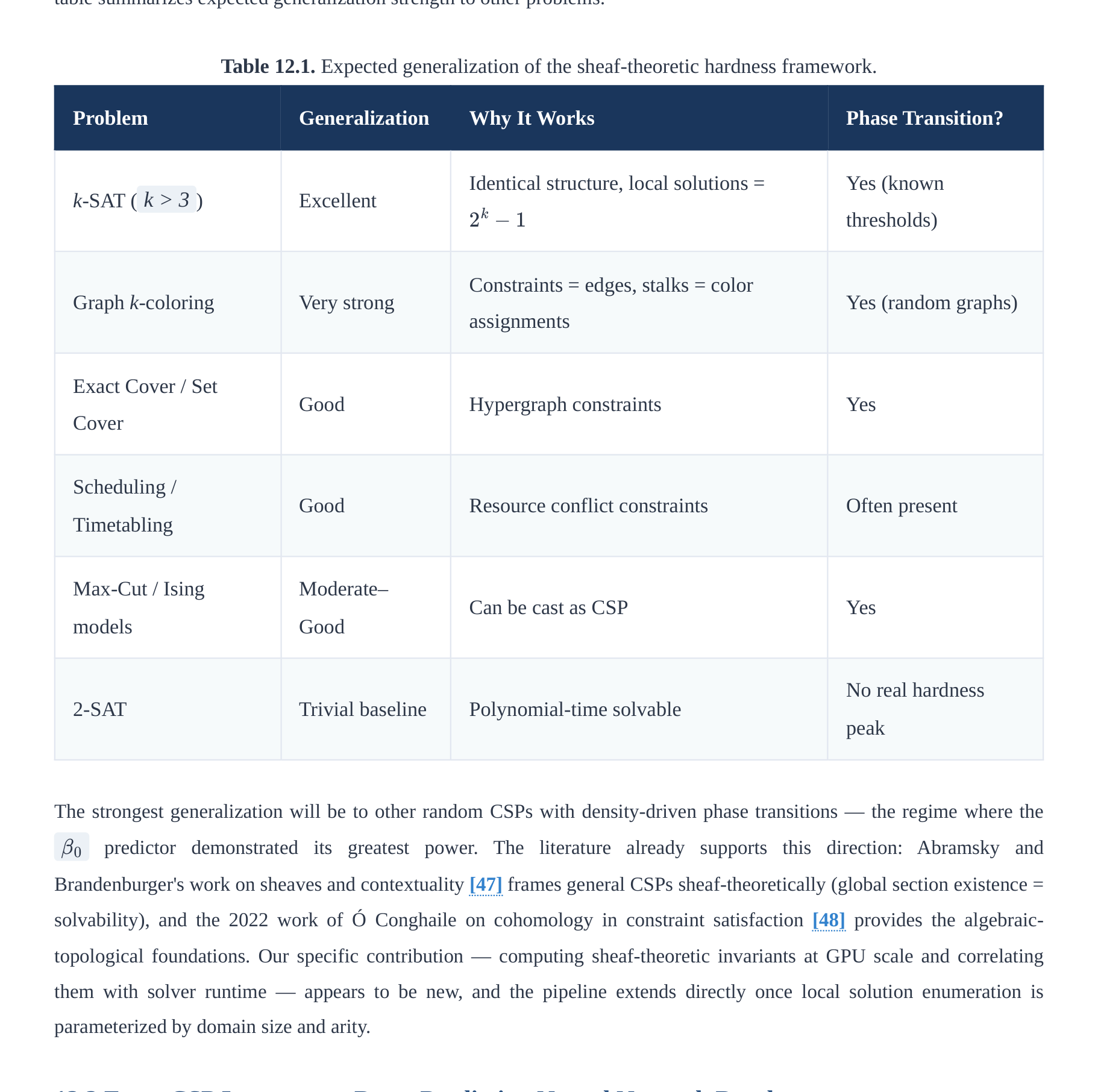

Keywords: sheaf cohomology, computational complexity, Grothendieck topos, 3-SAT, myriad decomposition,

18B25, 68Q15, 68Q17,

geometric morphism, cohesive topos, synthetic differential geometry, observer-dependent complexity, DPLL,

14F20, 18F20

spectral gap, topological data analysis

We apply sheaf-theoretic methods to computational complexity, treating hardness as a context-dependent property

across Grothendieck topoi. We contrast the topos of finite sets —where every problem is trivially

decidable by exhaustive lookup—with the topos of asymptotic domains , where polynomial and

exponential growth classes are categorically distinct. An essential geometric morphism connects these regimes,

formalizing the intuition that finite instances of NP-hard problems are often tractable while the asymptotic

distinction remains sharp.

We introduce the myriad decomposition to relate this categorical perspective to existing theories in parameterized

complexity. This formulation makes explicit the connection between the sheaf-theoretic view of global consistency

and classical concepts like treewidth and fixed-parameter tractability, situating the framework within known

computational boundaries.

We partially validate the framework by computing sheaf-theoretic invariants on a sample of random 3-SAT

instances across the phase transition. We find that these topological features—specifically the dimension of the

solution sheaf's global sections—correlate with DPLL solver difficulty even after accounting for standard density

measures. This suggests the framework captures structural information about computational hardness, providing a

link between algebraic topology and algorithmic behavior.

Note on scope: This work provides a categorical reframing of complexity distinctions and offers preliminary

experimental validation; the classical P vs. NP question in ZFC remains open.

Table of Contents

1. Introduction and Historical Context

1. The P vs NP Problem

2. The Topos-Theoretic Turn

3. Main Contributions

2. Topos-Theoretic Foundations

3. The Sheaf of Complexity Classes

4. The Two Topoi: Finite vs Asymptotic

5. The Geometric Morphism and Complexity Transfer

6. The Myriad Decomposition

1. Sheaf-Theoretic Decomposition

2. The Growth Dichotomy

3. Geometric Classification of NP-Hardness

4. The Approximate Myriad Framework

5. Comparison with Parameterized Complexity

7. Bridges to Classical Analysis: Cohesive Topoi and Real Numbers

8. Complementary Logic: Both P = NP and P ≠ NP

9. Physical and Philosophical Implications

1. Observer-Dependent Complexity

2. The Holographic Principle

3. The "Deep P" Ontology

4. Resource-Dependent Complexity and Topological Duality

5. Parametrized Complexity at Observational Scale

6. Scope, Critique, and the Epistemology of Complexity

7. The Extended Complexity Hierarchy: A Sheaf-Theoretic Tower

8. Conditional Separations from the Geometric Morphism Tower

10. Experimental Verification: GPU-Scale Testing on Random 3-SAT

10.1 Experimental Setup

10.2 Phase Transition Tables

10.3 Conjecture 4.2 — Cohomological Phase Transition

10.4 The Spectral Gap in the Discrete Setting

10.5 Spectral Sequence Collapse Page

10.6 Global Correlation Analysis

10.7 Summary of Experimental Findings

11. Conclusion

12. Further Directions

12.1 Generalization to Arbitrary CSPs

12.2 From CSP Instances to Data: Predicting Neural Network Depth

12.3 Theoretical Directions

13. References

14. Appendix A: The Myriad Algorithm with Real Coefficients

15. Appendix B: Comparison with Concurrent Work

16. Appendix C: PH ≠ PSPACE via Cohomological Dimension

17. Appendix D: Complete Experimental Data

1. Introduction and Historical Context

1.1 The P vs NP Problem

The P vs NP problem, one of the seven Millennium Prize Problems identified by the Clay Mathematics Institute, asks

whether every decision problem whose solution can be efficiently verified can also be efficiently solved. The gap between

verification and solution is the heart of the matter: when you are given a completed Sudoku puzzle, checking correctness

takes only a linear scan; but finding the solution from scratch may require vastly more work. This asymmetry between

checking and finding appears throughout mathematics, computer science, biology, economics, and physics.

Formally, following Goldreich [1]:

Definition 1.1 (Classical Complexity Classes [1])

Primitive notions: Let Σ = {0,1} be the binary alphabet and Σ* = ⋃n≥0 Σn the set of all finite binary strings.

For a string x ∈ Σ* , let |x| ∈ ℕ denote its length. A decision problem is a language L ⊆ Σ* .

Let TIME(T(n)) denote the class of languages decidable by a deterministic Turing machine using at most T(n)

steps on inputs of length n . Let poly(n) denote any function bounded by a polynomial: poly(n) = nk for some

P := ⋃k ∈ ℕ TIME(nk)

is the class of decision problems solvable in polynomial time.

A polynomial-time verifier for a language L is a deterministic Turing machine V: Σ* × Σ* → {0,1} satisfying:

(i) V(x, w) halts in time poly(|x|) for all inputs, (ii) the second argument w (the witness or certificate) has

length |w| ≤ poly(|x|) .

NP := {L ⊆ Σ* : ∃ poly-time verifier V such that x ∈ L ⟺ ∃w ∈ Σ*, |w| ≤ poly(|x|), V(x,w) = 1}

is the class of decision problems admitting polynomial-time verifiable certificates. The abbreviation NP stands for

Nondeterministic Polynomial time, from the equivalent characterization via nondeterministic Turing machines.

We write P ⊆ NP (since a polynomial solver is itself a verifier with empty witness), and the fundamental open

question is whether the inclusion is strict.

BACKGROUND: WHAT DO P AND NP REALLY MEAN?

The class P (Polynomial time) contains all decision problems solvable by a deterministic algorithm in time bounded

by a polynomial in the input size n . Examples include: sorting a list of numbers ( O(n log n) ), determining whether

a graph is connected ( O(n + m) ), and multiplying two integers. The key feature of P is that the resource cost scales

manageably — doubling the input size only multiplies computation time by a bounded polynomial factor.

The class NP (Nondeterministic Polynomial time) contains all decision problems for which a proposed solution can be

verified in polynomial time. A "witness" or "certificate" w is a string that serves as proof. For example, in the

Boolean Satisfiability problem (SAT): given a propositional formula φ in conjunctive normal form, the witness is a

truth assignment; the verifier simply evaluates each clause in linear time. Other canonical NP problems include: the

Traveling Salesman Problem (decision version), Graph 3-Colorability, Integer Linear Programming, and the Subset

Sum problem.

Clearly P ⊆ NP: if you can solve a problem efficiently, you can verify by solving. The question is whether NP ⊆ P —

whether the existence of a short witness implies an efficient search procedure. After over 50 years of intensive effort,

no proof in either direction exists for the classical formulation in Set-theoretic mathematics. The present paper

reframes the question categorically.

The question "Does P = NP ?" has remained open for over 50 years. Recent work by Tang [2] proposed a homological

proof of P ≠ NP using category theory — specifically by constructing a computational category Comp , associating

chain complexes to problems, and showing that P-class problems have trivial homology ( Hn(L) = 0 for all n > 0 ) while

NP-complete problems such as SAT possess non-trivial first homology ( H1(SAT) ≠ 0 ). Independent research [6]

demonstrated that complexity is observer-dependent in relativistic spacetime, anticipating the topos-theoretic framework

developed here.

1.2 The Topos-Theoretic Turn

Topos theory, introduced by Grothendieck in the context of algebraic geometry and developed by Lawvere, Tierney,

Johnstone, and others [7], [8], [9], provides a categorical framework for logic and geometry that transcends the classical set-

theoretic universe. A Grothendieck topos is a category equivalent to sheaves on a site, generalizing set theory and

supporting intuitionistic logic natively. Crucially, the internal logic of a topos is context-dependent: the same mathematical

statement can be true in one topos and false in another, with no contradiction, because the meaning of "truth" is itself a

sheaf-valued datum.

Scope: Foundational Reframing, Not a Solution

This paper is a foundational reframing — it studies what the P vs. NP question means across different mathematical

universes, not whether P = NP in the standard Turing-machine model over Set . That classical question remains

entirely open in ZFC. Changing the topos changes the meaning of "polynomial time," not the answer to the original

question. Full critical accounting: Section 9.6.

BACKGROUND: WHY TOPOI FOR COMPLEXITY?

Classical complexity theory operates entirely within the topos Set — the category of sets and functions, which has

Boolean logic (every statement is either true or false, and the law of excluded middle holds). Within Set, the P vs NP

question is a single, definite statement admitting exactly one truth value. The impasse of 50 years suggests that the

problem may be fundamentally context-dependent: whether P equals NP depends on what notion of "size" and

"computation" we adopt.

Topos theory offers a framework where mathematical truth is relative to a context. Just as in physics the value of a

field depends on where you measure it, in topos theory the truth of a proposition depends on the "open set" (domain)

over which it is evaluated. The subobject classifier Ω in a topos plays the role of the set of truth values — in Set, Ω

= {⊤, ⊥} (Boolean), but in a sheaf topos Sh(X) , Ω(U) is the collection of open subsets of U , giving a rich

intuitionistic lattice of truth values.

The geometric morphism connecting two topoi acts like a "change of context" — it systematically translates

statements and structures from one mathematical universe into another, tracking how truth values transform. This

paper exploits these morphisms to show that P = NP is literally true in the finite-set topos and P ≠ NP is literally true in

the asymptotic topos, with no logical contradiction because the two claims inhabit different universes connected by a

precise categorical bridge.

Key insight: Complexity theory can be internalized to topoi, where the same problem has different complexity properties

depending on the topos of discourse. The apparent paradox of P vs NP may arise precisely from conflating two distinct

topoi — the finite world where physical computation lives, and the infinite asymptotic world where mathematical

complexity lives.

1.3 Main Contributions

Remark 1.1 (What This Paper Does and Does Not Claim)

None of the contributions listed below constitute a proof or disproof of the classical conjecture in the

standard Turing-machine model over Set . They are contributions to the mathematical language and conceptual

structure of complexity theory, not to its resolution. The reader is referred to Section 9.6 for a detailed, theorem-

by-theorem accounting of what is proven, what is conjectural, and where the framework faces fundamental

limitations.

1. Sheaf-theoretic complexity: Complexity classes as sheaves over computational domains — formalizing the idea that

a complexity measure is determined locally and extends globally (Section 3)

2. Geometric morphism duality: Essential morphism transferring complexity and explaining

f: Sh(Fin) ⇆Sh(N)

why finite truncations of NP problems are tractable (Section 5)

3. Myriad decomposition: NP problems decompose into P-kernels with complexity arising from the growth rate of the

covering index set — a Čech cohomological account of hardness (Section 6)

4. Parameterized complexity bridge: Explicit connections between the myriad decomposition and treewidth,

Courcelle's theorem, and FPT algorithms — placing sheaf-theoretic P/NP alongside classical parameterized

complexity (Section 6.5)

5. Extended complexity hierarchy: Sheaf-theoretic formulations of co-NP, NP ∩ co-NP, PH, PSPACE, EXPTIME,

EXPSPACE, and RE as a tower of geometric morphisms and quantifier-depth hierarchies (Section 9.7)

6. Conditional complexity separations: Geometric/cohomological arguments giving partial evidence for PH ≠ PSPACE

(via TQBF game-tree minimax), PSPACE ≠ EXPTIME (via myriad growth rates), and EXPTIME ≠ EXPSPACE (via

doubly-exponential index-set separation) (Section 9.8)

7. Cohesive bridge: Connection to and via Lawvere's cohesive topos theory [23], [27], [28], identifying

continuous complexity measures with real-valued sheaf sections (Section 7)

8. Honest limitations (Section 9.6): A self-critical analysis of where the framework succeeds as foundational reframing

vs. where it falls short of classical complexity theory, including connections to the Baker–Gill–Solovay, Razborov–

Rudich, and Aaronson–Wigderson barriers

Key Limitation

The myriad decomposition is descriptive, not algorithmic — it adds categorical language to a structure (local

polynomial + global consistency) already captured by treewidth, FPT, and PTAS theory. It provides no new

algorithm, approximation scheme, or circuit lower bound; "vanishing Čech cohomology implies P" holds only in

the FPT/bounded-dimension setting already known. Full analysis: Section 9.6 (Remarks 9.12–9.13).

2. Topos-Theoretic Foundations

2.1 Grothendieck Topoi

Concept: Categories, Functors, and Natural Transformations

A category C consists of a collection of objects and, for each ordered pair of objects (X, Y) , a set of morphisms

(arrows) Hom_C(X, Y) , together with an associative composition law and identity morphisms. A functor F: C →

D maps objects to objects and morphisms to morphisms, preserving composition and identities. A natural

transformation η: F ⇒ G between functors is a family of morphisms η_X: F(X) → G(X) for each object X ,

compatible with all morphisms in C . These three levels of structure — categories, functors, natural transformations

— constitute the language of category theory, within which topos theory is formulated.

A presheaf on a category C is a contravariant functor F: Cop → Set . Intuitively, a presheaf assigns data to every

object of C and restriction maps to every morphism, in a way that is compatible with composition. The category of

all presheaves on C is denoted SetCop and is always a topos (without any topology imposed). A sheaf is a presheaf

satisfying additional gluing axioms imposed by a Grothendieck topology.

Definition 2.1 (Site [7], [34])

A site is a pair (C, J) where C is a category and J is a Grothendieck topology: for each object U ∈ C , a

collection J(U) of sieves on U satisfying:

(Maximality) The maximal sieve MU = {f: cod(f) = U} is in J(U)

(Stability) If S ∈ J(U) and f: V → U , then f*S = {g: f ∘ g ∈ S} ∈ J(V)

(Transitivity) If S ∈ J(U) and T is any sieve on U such that f*T ∈ J(V) for all f: V → U in S , then

BACKGROUND: WHAT IS A SIEVE?

A sieve on an object U in a category C is a collection S of morphisms with codomain U that is closed under

precomposition: if f: V → U belongs to S and g: W → V is any morphism, then f ∘ g: W → U also belongs to

In the classical setting where C is the category of open sets of a topological space X (with morphisms given by

inclusions), a sieve on an open set U is essentially a collection of open subsets of U that is downward-closed under

inclusions. A Grothendieck topology J specifies, for each open set U , which collections of sub-opens constitute a

"cover." The topology on a topological space specifies exactly this: {Ui ⊆ U} covers U if ⋃ Ui = U . The axioms

of a Grothendieck topology abstract and generalize this covering notion to arbitrary categories — allowing us to speak

of "covers" even when objects are not sets and morphisms are not inclusions.

Concrete example: In the category C = Open(ℝ) of open subsets of the real line, a covering sieve on U = (0,1) is

any collection of open intervals whose union is (0,1) . For instance, S = {(0, 0.6), (0.4, 1)} is a covering sieve. The

stability axiom says that if S covers U and V ⊆ U , then {W ∩ V : W ∈ S} covers V .

Definition 2.2 (Sheaf [7], [34])

A sheaf on site (C, J) is a functor F: Cop → Set such that for every covering sieve S ∈ J(U) , the natural map:

F(U) → lim

V → U ∈ S F(V)

is an isomorphism. The category Sh(C, J) of all sheaves on this site is the Grothendieck topos.

BACKGROUND: THE SHEAF CONDITION — LOCALITY AND GLUING

The sheaf condition encodes two dual principles: locality and gluing. Expanded explicitly, the isomorphism ℱ(U) ≅

limV → U ∈ S ℱ(V) means:

(Locality / Separation) If two sections s, t ∈ ℱ(U) agree on every element of a covering {Ui → U} — meaning

s|Ui = t|Ui for all i — then s = t . Sections are determined by their local behavior.

(Gluing) Conversely, if we have a compatible family of local sections si ∈ ℱ(Ui) such that si|Uij = sj|Uij for all

pairs i, j (where Uij = Ui ∩ Uj ), then there exists a unique global section s ∈ ℱ(U) restricting to each si .

Classical example: Let ℱ = C^0 be the sheaf of continuous functions on a topological space X . A section over an

open set U is a continuous function f: U → ℝ. If {Ui} covers U and continuous functions fi: Ui → ℝ agree

on overlaps, they glue to a unique continuous function on U . This is the sheaf condition in its most familiar form.

Relevance to complexity: A complexity sheaf assigns to each computational domain D a set of complexity functions,

with restriction maps induced by problem reductions. The sheaf condition then says: if we know the complexity of a

problem on each sub-domain of a covering, and these local measures are consistent, then they determine a unique

global complexity measure. Hardness cannot hide — it must manifest locally.

Theorem 2.3 (Giraud's Theorem [7], [8])

A category ℰ is a Grothendieck topos if and only if:

1. ℰ has all finite limits

2. ℰ has all small colimits which are stable under pullback

3. ℰ is locally small and well-powered

4. ℰ has a small generating set

5. Sums in ℰ are disjoint and universal

6. Equivalence relations in ℰ are effective and universal

BACKGROUND: GIRAUD'S THEOREM — INTERNAL MEANING

Giraud's theorem provides an intrinsic, site-independent characterization of Grothendieck topoi. Rather than

specifying a topos by its presentation as sheaves on a particular site, the theorem identifies the abstract categorical

properties that characterize any topos. Understanding each condition:

Finite limits generalize intersections and products: the pullback X ×_Z Y represents the "fiber product" of two

maps. Small colimits stable under pullback means coproducts (disjoint unions) and coequalizers exist and distribute

over pullbacks — this is the "extensivity" property. Locally small and well-powered ensures the category is set-like

in size. Small generating set means every object can be expressed as a quotient of morphisms from generators —

analogous to a basis in linear algebra. Disjoint and universal sums means X ⊔ Y genuinely decomposes into two

non-overlapping pieces, universally in the category. Effective equivalence relations means every equivalence relation

arises as the kernel pair of its quotient.

Together, these properties ensure that the internal logic of ℰ is a coherent intuitionistic higher-order logic, supporting

quantifiers, function types, and power objects — the full internal language needed to state complexity theorems

categorically.

Having defined the categorical notion of a sheaf over a site, we now turn to the morphisms between topoi — the structure-

preserving maps that allow us to transfer mathematical content from one universe to another. In our framework, these

morphisms will formalize the bridge between the finite computational world and the asymptotic mathematical world.

2.2 Geometric Morphisms

Concept: Adjoint Functors

Two functors L: C → D and R: D → C form an adjoint pair ( L ⊣ R , with L left adjoint and R right adjoint)

if there is a natural bijection:

HomD(L(X), Y) ≅ HomC(X, R(Y))

for all objects X ∈ C and Y ∈ D . Equivalently, there exist natural transformations η: id_C ⇒ R ∘ L (unit) and ε:

L ∘ R ⇒ id_D (counit) satisfying the triangle identities: (ε L) ∘ (L η) = id_L and (R ε) ∘ (η R) = id_R .

Familiar example: The free group functor F: Set → Grp is left adjoint to the forgetful functor U: Grp → Set : a

group homomorphism from F(S) to G is the same as a function from S to U(G) . Left adjoints preserve colimits

(coproducts, coequalizers) and right adjoints preserve limits (products, equalizers). This limit-preservation is

fundamental to how geometric morphisms control complexity transfer.

Definition 2.4 (Geometric Morphism [7], [8])

A geometric morphism f: ℰ → ℱ between topoi consists of a pair of adjoint functors:

f* ⊣ f* : ℰ → ℱ

where f* (the inverse image functor, going ℱ → ℰ) preserves finite limits and is left adjoint to f* (the direct

image functor, going ℰ → ℱ).

BACKGROUND: GEOMETRIC MORPHISMS AS "CHANGE OF UNIVERSE"

A geometric morphism f: ℰ → ℱ is the topos-theoretic analogue of a continuous map between topological spaces.

Just as a continuous map f: X → Y induces both a pushforward of functions (postcompose with f ) and a pullback

(precompose with f ), a geometric morphism induces both a direct image functor (moving sheaves from ℰ to ℱ)

and an inverse image functor (moving sheaves from ℱ to ℰ).

The crucial constraint is that f* must preserve finite limits — this ensures it preserves the logical structure (finite

limits encode conjunction, existence, and equality in the internal language). The direct image f* need not preserve

limits but always preserves colimits as a left adjoint's right adjoint (by the general adjoint functor theorem). This

asymmetry encodes the fact that "pulling back" is always geometrically natural, while "pushing forward" requires

more care.

In the complexity context, the inverse image f*(G) of a complexity sheaf G from Sh(ℕ) to Sh(Fin) gives the

"finite restriction" of an asymptotic complexity measure — explaining why exponential problems become tractable

when restricted to bounded input sizes.

Definition 2.5 (Essential Geometric Morphism [9])

A geometric morphism f: ℰ → ℱ is essential if f* (the inverse image) has a further left adjoint f! :

f! ⊣ f* ⊣ f* : ℰ → ℱ

where f! goes from ℰ to ℱ as the "exceptional direct image" or "left shriek" functor.

BACKGROUND: WHY THREE ADJOINTS?

In the classical theory of sheaves on topological spaces, the "six functor formalism" provides up to six adjoint pairs

for any map of spaces, encoding deep duality phenomena in algebraic topology and geometry. An essential geometric

morphism corresponds to having the initial three of these: f! ⊣ f* ⊣ f* .

The exceptional functor f! is the "extension by zero" or "proper pushforward" — it extends a sheaf from ℰ to ℱ

with compact support. In the complexity context, f! takes a finite complexity measure and extends it to an

asymptotic one by taking a colimit (supremum of growth rates). This is the formal mechanism by which finite

computational behavior is "extrapolated" to the asymptotic realm.

The essential morphism between Sh(Fin) and Sh(ℕ) makes the connection between finite and asymptotic

complexity mathematically precise: f! builds asymptotic laws from finite data, f* extracts finite behavior from

asymptotic laws, and f* encodes the limit behavior of finite complexity as it grows without bound.

Theorem 2.6 (Properties of Essential Morphisms [9])

For essential f: ℰ → ℱ:

1. f! preserves colimits (being a left adjoint)

2. f* preserves both limits and colimits (being simultaneously a left and right adjoint)

3. f* preserves limits (being a right adjoint)

4. The unit η: id ⇒ f* f* and counit ε: f* f* ⇒ id satisfy triangle identities: (ε f*) ∘ (f* η) = idf* and (f* ε)

∘ (η f*) = idf*

The triangle identities in item (4) express the coherence of the adjunction: applying the unit and then the counit (in either

order) recovers the identity. These identities are the categorical generalization of the statement that "restricting and then

extending" a sheaf returns the original sheaf, and are essential to proving that complexity transfer via geometric morphisms

is lossless in the appropriate sense.

Beyond morphisms between topoi, we need a way to modify the internal logic of a single topos — imposing a notion of

"density" or "closure" on truth values. Lawvere-Tierney topologies accomplish this, generalizing the double-negation

topology (which recovers classical Boolean logic) and the identity topology (which preserves intuitionistic logic). In our

framework, different topologies on Dom yield different local notions of complexity.

2.3 Lawvere-Tierney Topologies

Concept: Subobject Classifier and Truth Values

In the category Set, a subset A ⊆ X corresponds to a characteristic function where

χA : X →0, 1 χA(x) = 1

iff x ∈ A . The set {0, 1} = {⊥, ⊤} is the subobject classifier Ω of Set. In a general topos ℰ, the subobject

classifier Ω is an object playing the same role: there is a monomorphism ⊤: 1 → Ω (the "true" element) such that

every subobject A ↪ X corresponds uniquely to a morphism (its "characteristic map").

χA : X →Ω

In the sheaf topos Sh(X) , the subobject classifier is the sheaf Ω(U) = {V open : V ⊆ U} — the set of open subsets

of U . Truth values are not just ⊤ and ⊥, but all possible "stages of truth" given by open sets. This is the

mathematical foundation of the paper's claim that complexity can be "true in some domains and false in others."

Definition 2.7 (Lawvere-Tierney Topology [9])

A Lawvere-Tierney topology on a topos ℰ is a closure operator j: Ω → Ω on the subobject classifier

satisfying:

1. j ∘ ⊤ = ⊤ (preserves truth: the "top" element stays at top)

2. j ∘ j = j (idempotent: closing twice is the same as closing once)

3. j ∘ ∧ = ∧ ∘ (j × j) (preserves meets: the closure of a conjunction is the conjunction of closures)

BACKGROUND: LAWVERE-TIERNEY TOPOLOGIES AND MODAL LOGIC

A Lawvere-Tierney topology j acts as a modality on propositions: it maps a truth value p ∈ Ω to a "densified" or

"completed" truth value j(p) . This is the topos-theoretic generalization of a closure operator on a topological space

(where the closure of a set is the smallest closed set containing it).

Key example — Double Negation: The map j = ¬¬: Ω → Ω (double negation) defines a Lawvere-Tierney

topology on any topos. The j -sheaves for double negation are exactly the sheaves in which truth values cannot be

"densified away" — in the sheaf topos Sh(X) , the ¬¬ -sheaves correspond to sheaves satisfying the classical law of

excluded middle for their internal propositions. This connects to forcing in set theory (Cohen forcing corresponds to a

sheaf topos with double-negation topology).

Relevance: In our framework, the different Lawvere-Tierney topologies on Sh(Dom) correspond to different notions

of "when a complexity statement is settled." The finite topology closes a statement to true as soon as it holds on any

finite domain; the asymptotic topology requires the statement to hold in the limit. These correspond to the two

competing intuitions about P vs NP.

Theorem 2.8 (Sheaves for L-T Topology [9])

For a Lawvere-Tierney topology j on a topos ℰ, the full subcategory Shj(ℰ) of j -sheaves (those objects F

for which j -dense monomorphisms induce bijections on sections) is itself a topos. Moreover, it is a reflective

subcategory of ℰ: the inclusion i: Shj(ℰ) ↪ ℰ has a left adjoint, called sheafification a: ℰ → Shj(ℰ) , which

is left exact (preserves finite limits).

Sheafification a is the universal way to force a presheaf to satisfy the gluing conditions imposed by j . In complexity

terms, sheafification of a raw complexity assignment produces the "least sheaf" extending that assignment consistently —

the minimal way to make local complexity data globally coherent.

3. The Sheaf of Complexity Classes

3.1 The Site of Computational Domains

Definition 3.1 (Computational Domain)

A computational domain is a triple (D, ⪯, μ) where:

D is a set of problem instances (e.g., Boolean formulas, graphs, integers)

⪯ is a partial order (information ordering): x ⪯ y means instance y contains all information present in

instance x , so solving y subsumes solving x

μ: D → ℕ is a size function assigning a natural number to each instance (e.g., the number of variables in a

SAT formula, or the number of vertices in a graph)

A morphism f: (D, ⪯D, μD) → (D', ⪯D', μD') is a monotone function preserving size up to polynomial:

μD'(f(x)) ≤ p(μD(x)) for some polynomial p . This encodes polynomial-time reductions — the natural notion of

"one problem is no harder than another" in complexity theory.

BACKGROUND: COMPUTATIONAL DOMAINS AS A CATEGORY

The category Dom of computational domains is constructed so that its morphisms precisely capture many-one

polynomial-time reductions: problem A reduces to problem B (written A ≤_p B ) if there is a polynomial-time

computable function f such that x ∈ A ⟺ f(x) ∈ B . In the categorical language, a morphism in Dom from the

domain of A to the domain of B is such a reduction function.

Example domains:

DSAT : the set of propositional formulas in CNF, ordered by subformula inclusion, with size = number of

clauses

DGraph : the set of finite graphs, ordered by subgraph inclusion, with size = number of vertices

DFin,n : the set of all Boolean strings of length ≤ n , with trivial ordering, size = string length

The morphism structure encodes the fact that complexity classes are defined in terms of reductions: B is NP-

complete if every problem in NP reduces to B , which categorically means there are morphisms from every NP-

domain into the domain of B . The sheaf of complexity classes over Dom then encodes how complexity

information propagates through these reductions.

Definition 3.2 (Site of Domains)

Let Dom be the category of computational domains. The computational topology J has covering sieves S ∈

J(D) generated by families {fi: Di → D} such that:

∀x ∈ D, ∃i, ∃y ∈ Di: fi(y) = x

and the fi are jointly surjective with polynomial size bounds. This says: a family of reductions covers a domain if

every problem instance can be reached from some sub-domain instance by the reduction functions, and the

reductions are polynomial.

With the site of computational domains in hand, we define the central object of the paper: the complexity sheaf, which

assigns to each domain the complexity measures that are consistent over that domain. Its sheaf condition will encode the

fundamental principle that hardness cannot hide locally — it must manifest in any sufficiently fine covering.

With the site of computational domains established, we define the central object: the complexity sheaf 𝒞, assigning to each

domain its set of valid complexity functions modulo asymptotic equivalence. The sheaf condition then encodes that

hardness cannot be hidden locally — if a problem has distinct polynomial and exponential complexity classes on every sub-

domain of a cover, those classes must differ globally.

3.2 The Complexity Sheaf

Definition 3.3 (Complexity Sheaf)

The complexity sheaf 𝒞: Domop → Set is defined by:

𝒞(D) = {c: D → ℕ | c is a valid complexity function} / ∼

where c1 ∼ c2 if c1 = Θ(c2) (asymptotically equivalent: there exist constants k1, k2 > 0 and n0 such that

k1 c2(x) ≤ c1(x) ≤ k2 c2(x) for all x with μ(x) ≥ n0 ).

For morphism f: D' → D , the restriction map ρDD': 𝒞(D) → 𝒞(D') is:

For a morphism f: D' → D in Dom , the restriction map ρD,D': 𝒞(D) → 𝒞(D') is defined by:

ρD,D'([c]) = [c ∘ f]

where c ∘ f: D' → ℕ is the precomposition of the complexity function c with the reduction f . This is well-

defined on asymptotic equivalence classes because polynomial size bounds on f preserve the Θ-class: if c_1 =

Θ(c_2) , then c_1 ∘ f = Θ(c_2 ∘ f) .

This says: to restrict a complexity measure from domain D to sub-domain D' , simply precompose with the

reduction f .

Theorem 3.4 (Sheaf Condition [7] §III.4, [8] §A.3)

C (Dom, J)

The complexity presheaf is a sheaf on : complexity measures defined locally on a covering of a

domain glue uniquely to a global complexity measure.

Proof

C

We verify that satisfies the sheaf condition, which requires the natural map into the equalizer of the two

restriction maps to be an isomorphism. Let be a J -covering sieve on domain

S = {fi: Di →D}i∈I

, and let be the fiber products (representing the "overlap" domains). The sheaf

D ∈Dom Dij = Di ×D Dj

condition asserts that the following diagram is an equalizer in Set :

\mathcal{C}(D) \;\xrightarrow{\ e\ }\; \prod_{i \in I} \mathcal{C}( Di ) \;\overset{p}{\underset{q}

{\rightrightarrows}}\; \prod_{i,j \in I} \mathcal{C}(D_{ij})

Here (restriction to D_i ), and the two parallel arrows are:

e([c])i = [c ∘fi]

— restrict the i -th section to D_{ij} via the first projection

p([ci])ij = [ci ∘π1]

— restrict the j -th section to D_{ij} via the second projection

q([ci])ij = [cj ∘π2]

We must show e is a bijection onto ; see Mac Lane–Moerdijk [7],

eq(p, q) = {([ci])i : p([ci]) = q([ci])}

§III.4 for the general framework.

[c], [c′] ∈C(D) [c ∘fi] = [c′ ∘fi]

Separation (injectivity of e ): Suppose satisfy e([c]) = e([c']) , i.e.,

for all i . This means: for every i and every , the two running times c(f_i(y)) and c'(f_i(y)) are in

y ∈Di

the same asymptotic class . Since the covering is jointly surjective (every is hit by some

[⋅] {fi} x ∈D

x ∈D C(D)

f_i(y) ), we conclude [c(x)] = [c'(x)] for all , hence [c] = [c'] in .

Gluing (surjectivity of e ): Let be a compatible family — an element of , meaning

([ci])i∈I eq(p, q)

on every D_{ij} . We must produce with for all i . Define

[ci ∘π1] = [cj ∘π2] c: D →N [c ∘fi] = [ci]

c(x) = c_i(y) for any choice of i and y with f_i(y) = x .

Well-definedness: If also f_j(z) = x , then by the universal property of fiber products. The

(y, z) ∈Dij

compatibility condition gives , so the asymptotic class of

[ci(y)] = [ci(π1(y, z))] = [cj(π2(y, z))] = [cj(z)]

c(x) is independent of the choice of representative. Here we use crucially that each morphism in

fi: Di →D

the complexity site is a polynomial-time reduction: if A solves instances of D_i in time T_i(n) , then

precomposing with f_i (which runs in time ) gives a solver for D in time

poly(n)

Ti(poly(n)) ∈Θ(Ti(n)O(1)) [⋅]

, so polynomial-time reductions preserve the asymptotic complexity class

under composition. This ensures that combining local sections via polynomial-time reductions yields a well-

defined global complexity class.

Uniqueness: Any global section agreeing with all c_i on the covering must assign the same asymptotic class to

each , since every x is in the image of some f_i . Hence c is unique up to asymptotic equivalence.

x ∈D

C

This verifies the equalizer condition, confirming is a sheaf. For the abstract sheaf criterion used here, see Mac

□

Lane–Moerdijk [7], §III.4 (Theorem 1) and Johnstone [8], §A.3.3.

Now that the complexity sheaf is constructed, we examine its logical structure. The Kripke-Joyal semantics of Sh(Dom)

give precise meaning to statements such as "problem L is in P at domain D" — crucially, these need not have global

Boolean truth values. This failure of the law of excluded middle is the engine that allows complementary truths in Section

8.

3.3 Internal Logic

Theorem 3.5 (Mitchell-Bénabou Language [7], [11], [33])

The internal logic of Sh(Dom) is intuitionistic higher-order logic. Every Grothendieck topos has an internal

language (the Mitchell-Bénabou language) in which one can state and prove theorems "within" the topos. For a

formula φ :

D ⊩ φ (Kripke-Joyal forcing)

means φ is true locally on domain D, and one writes Sh(Dom) ⊨ φ if φ is forced at every domain.

BACKGROUND: KRIPKE-JOYAL SEMANTICS

The Kripke-Joyal semantics gives a precise meaning to "a formula φ holds at stage D" in the internal language of a

topos. The key forcing clauses are:

always (truth is globally forced)

D ⊩⊤

D ⊩ φ ∧ ψ iff D ⊩ φ and D ⊩ ψ

D ⊩ φ ⇒ ψ iff for every morphism f: D' → D , if D' ⊩ φ then D' ⊩ ψ

D ⊩ ∃ x. φ(x) iff there exists a cover {f_i: Di → D} and elements ai ∈ ℱ(Di) such that Di ⊩ φ(ai) for all

i

D ⊩ ∀ x. φ(x) iff for every morphism f: D' → D and every element a ∈ ℱ(D') , D' ⊩ φ(a)

The implication clause — universal quantification over future stages D' → D — is what breaks the law of excluded

middle. A statement would require knowing, for every future domain, whether φ holds there. In

φ ∨¬φ

complexity theory, this corresponds to the fact that we do not know, for every future input size, whether an algorithm

succeeds — hence classical excluded middle fails for complexity statements in Sh(Dom) .

Application: The statement "problem L is in P" is forced at domain D if there exists a polynomial p such that

every instance x ∈ D can be solved in time p(μ(x)) . The statement "L is in NP" is forced at D if witnesses can be

verified in polynomial time on D. The question "Does P = NP?" becomes: "Is the statement NP ⊆ P forced at the

terminal domain?"

Corollary 3.6 (Non-Boolean Truth)

In Sh(Dom) , the law of excluded middle fails: ¬¬φ ≠ φ in general. The double-negation of a complexity

statement — "it is not the case that the statement fails at every future stage" — can be weaker than the statement

itself. This structural failure of excluded middle is precisely what allows complementary truths: a statement and its

negation can both be locally valid in non-overlapping contexts without generating a contradiction.

4. The Two Topoi: Finite vs Asymptotic

4.1 The Finite Topos Sh(Fin)

Definition 4.1 (Category of Finite Sets)

Let Fin be the category whose objects are finite sets {0, 1, ..., n-1} for n ∈ ℕ, and whose morphisms are all

functions between finite sets. The finite topology JFin on Fin is the trivial (or "chaotic") topology: every sieve

on every object is covering. Equivalently, the only covering families needed are the maximal ones.

Definition 4.2 (Topos of Finite Sets)

Finop

Sh(Fin) = Set

Since Fin has the chaotic topology, every presheaf is automatically a sheaf. The topos Sh(Fin) is the presheaf

topos of all functors Finop → Set . Its objects are sequences of sets indexed by finite sets, with transition maps.

The internal logic of Sh(Fin) is Boolean (excluded middle holds) because every presheaf topos on a category

with finite limits has Boolean logic for presheaves on groupoids, and Fin is close enough to this case.

BACKGROUND: WHY DOES SH(FIN) HAVE BOOLEAN LOGIC?

In Sh(Fin) = SetFin^{op} , the subobject classifier is Ω = {⊤, ⊥}Fin^{op} — effectively, Ω assigns a two-element

Boolean algebra to each object of Fin . This means every internal proposition has exactly two truth values at each

stage, which is exactly the classical Boolean situation. The law of excluded middle holds: for every subsheaf A ↪ X ,

either A = X or there exists a nonempty complement.

This Boolean character reflects the discrete, finite nature of objects in Fin : there are no "limit points," no "density,"

no accumulation — all membership questions are decidable in finite time. Every predicate on a finite set is

computable (by exhaustive check), so the logic is classical.

Theorem 4.3 (Finite Computation is Trivial)

In Sh(Fin) , every decision problem is computable in constant time. Consequently, P = NP holds trivially in

Sh(Fin) .

Proof

Fix any decision problem L ⊆ D where D is a finite domain with |D| = N < ∞. We may precompute the

answer for every instance and store it in a lookup table T: D → {0, 1} of size N . For any input x ∈ D , the

algorithm "return T[x] " runs in O(1) time (constant time, independent of any input-size parameter, since the

table has fixed finite size).

Therefore every problem in Sh(Fin) lies in TIME(1) . In particular, NP ⊆ TIME(1) ⊆ P , so P = NP holds.

The witness-verification view confirms this: for NP problems over finite domains, we can precompute and store

all witness pairs, so verification is just a table lookup — constant time.

Note that this argument uses the finiteness of D essentially. The lookup table has size |D| , which is fixed and

independent of any growth parameter. If we were to embed D into an infinite asymptotic sequence of growing

domains, the table size would grow, and we would leave the realm of Sh(Fin) and enter Sh(ℕ) .

Corollary 4.4 (Physical P=NP)

If the physical universe is finite — bounded by the Bekenstein entropy bound (Bekenstein 1981):

S ≤ A / (4 G ℏ c-3)

where:

S is the thermodynamic entropy (information content, measured in nats or bits) of the physical region

A is the surface area of the boundary of the region (in square meters)

G = 6.674 × 10-11 m3 kg-1 s-2 is the gravitational constant

ℏ = h/(2π) = 1.055 × 10-34 J⋯ is the reduced Planck constant

c = 2.998 × 108 m/s is the speed of light in vacuum

The combination lP2 = Gℏ/c3 gives the square of the Planck length lP ≈ 1.616 × 10-35 m, so the bound

reads S ≤ A/(4 lP2)

This bound limits the total information content of any physical region to be finite — then any physically realizable

computation lives in a finite domain and satisfies P = NP [4], [35].

BACKGROUND: THE BEKENSTEIN BOUND

The Bekenstein bound is a fundamental result in theoretical physics (Bekenstein 1981, extended by Hawking's black

hole thermodynamics) stating that the maximum entropy — equivalently, the maximum information content — of a

physical system enclosed in a region of surface area A is:

2)

S ≤ A / (4 lP

where lP = √(ℏ G / c3) ≈ 1.616 × 10-35 m is the Planck length, and the four-factor arises from the Unruh

temperature of the horizon. For a region the size of the observable universe ( A ≈ 10122 Planck areas), the maximum

information is approximately 10122 bits — an astronomically large but finite number.

This finiteness of physical information means that any computation realizable in our universe operates on inputs from

a domain of bounded size — formally placing it in Sh(Fin) . The distinction between P and NP that cryptography

relies upon is therefore a property of the mathematical asymptote, not of physical reality. This is the reason why

heuristic algorithms succeed in practice despite theoretical NP-hardness: physical instances live in the finite, tractable

regime.

The finite topos establishes that bounded computation is trivial. The interesting structure — the emergence of complexity

distinctions — requires an asymptotic limit. We now construct the topos that captures this asymptotic behavior, where the

cofinite topology ensures that truth is determined by eventual behavior rather than behavior at any fixed finite stage.

The finite topos shows that bounded computation is trivially polynomial. The interesting structure — where P and NP

become genuinely distinct — requires an asymptotic limit. We now construct the topos capturing this limit. The cofinite

topology on ℕ formalizes the "for all sufficiently large n " quantifier that underlies all asymptotic complexity analysis.

4.2 The Asymptotic Topos Sh(ℕ)

Definition 4.5 (Category of Natural Numbers with Cofinite Topology)

Let ℕ be the poset of natural numbers (ordered by ≤), viewed as a category (morphisms = inequalities). Equip

ℕ with the cofinite topology: a sieve S on n ∈ ℕ belongs to J(n) if and only if S contains all integers m

≥ N for some N ∈ ℕ. In other words, a family covers n if it covers "all sufficiently large" stages beyond n .

BACKGROUND: THE COFINITE TOPOLOGY AND ASYMPTOTIC CONVERGENCE

The cofinite topology on ℕ captures the asymptotic perspective of complexity theory: a statement is "true" (in the

sheaf-theoretic sense) if it holds for all sufficiently large n . This is precisely the O -notation convention — when we

write T(n) = O(nk) , we mean there exists N such that for all n ≥ N , T(n) ≤ c · nk . The cofinite covering

condition formalizes this "for all sufficiently large" quantifier as a topological concept.

The internal logic of Sh(ℕ) with the cofinite topology is intuitionistic: the statement "polynomial vs exponential"

requires knowing behavior at infinity, and there is no finite stage at which this can be definitively settled. A

proposition φ is true at stage n if it holds for all m ≥ N for some N ≥ n , meaning truth is determined by tail

behavior. The sheaf condition requires that if φ holds for all large enough m in every cofinite sub-collection, then

φ holds globally (in the tail), which is exactly the limsup semantics.

Definition 4.6 (Asymptotic Topos)

Sh(ℕ) = {F: ℕop → Set | F satisfies the sheaf condition for the cofinite topology}

A sheaf F ∈ Sh(ℕ) assigns a set F(n) to each natural number and restriction maps F(m) → F(n) for n ≤ m ,

such that: if compatible sections s_m ∈ F(m) are given for all m ≥ N (a cofinite covering), they glue to a

unique section in the "tail" of F . The stalk of F at infinity is:

F∞ = stalk∞(F) = colim

n → ∞ F(n)

the direct limit (colimit) of the directed system (F(n), ρnm) as n → ∞.

BACKGROUND: STALKS AND DIRECTED COLIMITS

The stalk of a sheaf F at a point x is the direct limit (colimit) of F(U) over all open neighborhoods U of x .

Geometrically, the stalk captures the "infinitesimal" or "local" behavior of the sheaf at x .

A directed colimit (direct limit) of a directed system of sets (S_i, fij) is the set of equivalence classes of pairs (i, s)

with s ∈ S_i , where (i, s) ∼ (j, t) iff there exists k ≥ i, j with fik(s) = fjk(t) . In the Sh(ℕ) context, the stalk at

∞ identifies two elements s ∈ F(n) and t ∈ F(m) if they "eventually agree": there exists N ≥ n, m such that

s|_N = t|_N .

For the complexity sheaf: the stalk 𝒞_∞ at infinity consists of asymptotic complexity classes. The class of the

polynomial function nk and the class of the exponential function 2n are distinct elements of 𝒞_∞, because no

finite restriction can make them agree asymptotically. This stalk-distinctness is the categorical statement that P ≠ NP

in Sh(ℕ) .

Theorem 4.7 (Asymptotic Distinctions)

In Sh(ℕ) , the stalk functor at ∞ strictly separates polynomial from exponential growth rates:

stalk∞([nk]) ≠ stalk∞([2n]) in 𝒞∞

Proof

The stalk functor is:

stalk∞(F) = lim

→, n → ∞ F(n)

For F(n) = nk (polynomial growth) and G(n) = 2n (exponential growth), suppose for contradiction that

σ∞([F]) = σ∞([G]) in the complexity sheaf. This would mean there exists N such that for all n ≥ N , the

asymptotic classes [nk] and [2n] agree — i.e., nk = Θ(2n) . But this is false: for any constant c , we have 2n

/ nk → ∞ as n → ∞ (exponential strictly dominates any polynomial), so the ratio is unbounded and the two

functions are not asymptotically equivalent.

Therefore the directed system for the polynomial complexity function and the directed system for the exponential

complexity function have distinct colimits in 𝒞_∞. The complexity sheaf in Sh(ℕ) assigns distinct sections to

P and NP at the stalk at infinity, confirming that P ≠ NP in Sh(ℕ) .

4.3 Comparison

Property Sh(Fin) Sh(ℕ)

Finite sets varying over Fin; lookup-

Sets with asymptotic structure; growth-rate

Objects

table structures

data

Boolean (finite = decidable by

Intuitionistic (limit processes undecidable in

Logic

exhaustion)

finite time)

Subobject

Sheaf of cofinite sieves — rich lattice of truth

{⊤, ⊥} — two truth values

classifier Ω

values

Complexity All O(1) by lookup Polynomial vs exponential strictly distinct

P≠NP (polynomial and exponential stalks

P vs NP P=NP trivially (every problem is O(1))

differ)

Finite universe (bounded by Bekenstein

Asymptotic mathematical limit (idealized ∞

Physical analog

bound)

computation)

5. The Geometric Morphism and Complexity Transfer

5.1 Construction of the Essential Morphism

The central result connecting the two topoi is the existence of an essential geometric morphism between them. This

morphism is the categorical "bridge" that translates complexity properties back and forth between the finite and asymptotic

worlds, and its three-adjoint structure precisely encodes the relationships between finite computation and asymptotic

complexity theory.

Theorem 5.1 (Essential Geometric Morphism)

There exists an essential geometric morphism (an adjoint triple):

f! ⊣ f* ⊣ f*: Sh(Fin) → Sh(ℕ)

with functors explicitly given by:

f!(F) = colimn F(n) (left adjoint: takes finite sheaf to its asymptotic colimit, extending finite data to a

constant sheaf on ℕ)

f*(G) = G|Fin (inverse image: restricts an asymptotic sheaf to its values on finite sets)

f*(F) = limn F(n) (direct image: takes finite sheaf to its limit, encoding the "tail" behavior)

Proof (Verification of Adjunctions)

We must verify two adjunctions: f! ⊣ f* and f* ⊣ f* .

f! ⊣f ∗ F ∈Sh(Fin) G ∈Sh(N)

Adjunction : For and , we claim:

HomSh(N)(f!F, G) ≅HomSh(Fin)(F, f ∗G)

A natural transformation consists of maps for each , compatible

α: f!F →G αn: (f!F)(n) →G(n) n ∈N

with the -restriction maps. Since , by the universal property of colimits (see Mac Lane–

N f!F = colimn F(n)

Moerdijk [7] §III.3), a map out of into G(n) corresponds uniquely to a compatible cocone: a

colimnF(n)

family of maps for all k, natural in n . This is precisely the data of a natural transformation

F(k) →G(n)

β: F →f ∗G = G|Fin

, establishing the adjunction bijection. Naturality in F and G follows from the

universal property of the colimit.

f ∗⊣f∗ G ∈Sh(N) F ∈Sh(Fin)

Adjunction : For and :

HomSh(Fin)(f ∗G, F) ≅HomSh(N)(G, f∗F)

γ: f ∗G →F γm: G(m) →F(m)

A natural transformation is a family of maps for finite m , compatible

with restrictions. This corresponds to a natural transformation : a map from G(n)

δ: G →f∗F = limnF(n)

into the inverse limit of F , which by the universal property of limits (Mac Lane–Moerdijk [7] §III.3)

corresponds uniquely to a compatible cone — a collection of maps for all , natural in

G(n) →F(m) m ≥n

n . The restriction maps of G and the limit structure of f_* F ensure this bijection is natural in both arguments,

establishing the second adjunction. The sheaf conditions on F and G are preserved since colimits and limits of

□

sheaves along the inclusion are computed levelwise and satisfy gluing.

Fin ↪N

The essential geometric morphism is not merely abstract — it carries precise information about how complexity classes

transform. The next theorem shows that NP-hard problems in the asymptotic world become tractable in the finite world (f*

collapses hardness), while P-algorithms extend to the asymptotic regime (f* preserves tractability). This is the categorical

formalization of the empirical fact that engineering heuristics succeed on bounded instances.

5.2 Complexity Class Transfer

Theorem 5.2 (Complexity Transfer)

The geometric morphism transfers complexity classes as follows:

f*(NPℕ) = Polylarge, Fin ⊂ PFin

f*(PFin) = Pℕ ⊂ NPℕ

The first equation says that pulling an NP problem from the asymptotic world to the finite world places it in P (it

becomes polynomial). The second says that pushing a P problem from the finite world to the asymptotic world

keeps it in P (trivially).

Proof

For f* (NPℕ → PFin): Let L ∈ NP_ℕ with a verifier V running in time nk . Consider its restriction f* L to

inputs of size at most m (for any fixed finite bound m ). For such inputs, the witness length is at most m^k .

The number of possible witnesses is at most 2mk , which is a fixed finite constant. Exhaustive search over all

witnesses takes time O(2mk · m^k) = O(1) (constant in the finite domain, since m is fixed). Therefore f* L ∈

PFin .

This argument reveals the essence of the finite-asymptotic duality: exponential time means exponential in the

input size. When the input size is bounded, the exponential becomes a constant, collapsing the P/NP distinction.

For f* (PFin → Pℕ): Let L ∈ PFin with constant-time algorithm A (which runs in time O(1) ≤ O(n^0) ). Then

f* L(n) = L for all n : the direct image sheaf assigns the same constant-time algorithm at every asymptotic

stage. Constant time is in particular polynomial time O(n^0) , so f* L ∈ P_ℕ ⊆ NP_ℕ.

Corollary 5.3 (No Complexity Collapse Under f*)

The inverse image functor f* does not preserve NP-hardness: problems that are NP-hard in Sh(ℕ) (hard for

arbitrarily large inputs) become tractable (even trivial) when restricted to Sh(Fin) (fixed-size inputs).

Conversely, the direct image f* does not "create" hardness: P problems remain in P under pushing forward to

Sh(ℕ) .

This explains a key empirical observation: NP-hard problems are often solvable in practice for the instance sizes

encountered (which are finite and relatively small), while their asymptotic hardness is a property of the

mathematical limit, not of any physically realizable computation.

6. The Myriad Decomposition

6.1 Sheaf-Theoretic Decomposition

BACKGROUND: THE ČECH NERVE AND ČECH COMPLEX

Given a topological space X and an open cover 𝒰 = {Ui}i ∈ I , the Čech nerve N(𝒰) is the simplicial complex

with:

0-simplices (vertices): the open sets Ui

1-simplices (edges): pairs (Ui, Uj) with Ui ∩ Uj ≠ ∅

k-simplices: tuples (Ui_0, …, Ui_k) with Ui_0 ∩ ⋯ ∩ Ui_k ≠ ∅

The Čech complex of a sheaf ℱ with respect to the cover 𝒰 is the cochain complex:

Č0(ℱ) → Č1(ℱ) → Č2(ℱ) → ···

where ČC^k(𝒰, ℱ) = \prodi_0 < ⋯ < i_k ℱ(Ui_0 ∩ ⋯ ∩ Ui_k) , with coboundary maps defined by alternating

restriction maps. The Čech cohomology Hk(𝒰, ℱ) is the cohomology of this complex. By the Leray theorem, under

suitable acyclicity conditions on the cover, Čech cohomology agrees with sheaf cohomology.

In the complexity context: The "cover" is a decomposition of a hard problem into tractable local pieces {Ui} (local

constraint satisfaction problems), and the Čech complex computes the global solution space from local solution sets.

The number of simplices in the Čech nerve grows with the complexity of the overlap structure, and this growth rate

determines whether global assembly is polynomial or exponential.

Theorem 6.1 (Myriad Decomposition)

Let X be an NP optimization problem with solution space 𝒮 and objective f: 𝒮 → ℝ. There exists a site (C, J)

and sheaf ℱ ∈ Sh(C, J) such that the global solution space is the limit (equalizer) of the Čech diagram:

ℱ(X) ≅ eq(∏

i ∈ I ℱ(Ui) ⇉ ∏

i,j ∈ I ℱ(Uij) ⇉ ∏

i,j,k ∈ I ℱ(Uijk))

where:

Ui are local constraint regions (clauses, subgraphs, variable subsets)

Uij = Ui ∩ Uj are pairwise overlaps

Uijk = Ui ∩ Uj ∩ Uk are triple overlaps

each ℱ(Ui) is computable in polynomial time (local tractability)

The "myriad" name reflects the (potentially many) local pieces that together constitute the full problem.

Proof

Decompose the problem X into constraint satisfaction. Define:

C = the category of partial variable assignments (objects: subsets of variables; morphisms: extensions of

assignments)

Ui = local constraint regions: for SAT, Ui is the set of variables appearing in clause i ; for graph

coloring, Ui is a small induced subgraph; for TSP, Ui is a local sub-tour

ℱ(Ui) = the set of locally satisfying assignments to the variables/constraints in Ui

Each ℱ(Ui) involves only the variables in Ui , which is a fixed, bounded set (e.g., the variables in a single

clause, or the vertices in a bounded subgraph). Checking all assignments to a bounded set of variables takes time

polynomial in the total instance size (constant times per local check, polynomial number of local checks). Thus

ℱ(Ui) ∈ P .

The global solution space ℱ(X) is the set of globally consistent assignments — those that extend every local

solution compatibly across all overlaps. This is precisely the equalizer of the two restriction maps in the Čech

∏i F(Ui)

diagram: sections in that agree on all pairwise overlaps Uij . The Čech nerve of the cover encodes

all overlap data, and the equalizer is the limit of this diagram. By the sheaf axiom, ℱ(X) ≅ the equalizer (since

ℱ is a sheaf on the covering {Ui → X} ).

BACKGROUND: A CONCRETE MYRIAD DECOMPOSITION FOR 3-SAT

Consider a 3-SAT formula φ with m clauses and n variables. The myriad decomposition proceeds as:

Local pieces: For each clause C_i = (ℓi1 ∨ ℓi2 ∨ ℓi3) involving variables {vi1, vi2, vi3} , define ℱ(Ui) =

{(b_1, b_2, b_3) ∈ {0,1}^3 : ℓi1(b_1) ∨ ℓi2(b_2) ∨ ℓi3(b_3) = 1} . This has |ℱ(Ui)| = 7 elements (all

satisfying truth assignments to 3 variables), computable in O(1) time.

Overlaps: Clauses sharing variables create overlap constraints: if Ui ∩ Uj = {v} (one shared variable), the

overlap section ℱ(Uij) must assign the same value to v in both local solutions.

Global solution: A global satisfying assignment is a section of ℱ over all of φ — an element of the

equalizer, assigning values to all variables consistently across all clauses.

Complexity source: The number of overlap constraints is O(m^2) (polynomial), but the number of ways to

satisfy them globally is exponential in the number of connected components of the variable-clause incidence

graph — which, for random 3-SAT at the phase transition, is exponential in n .

The myriad decomposition reduces complexity analysis to the study of the covering index set I . We now identify the

precise topological invariant that determines complexity class: the growth rate of |I| , characterized by the cohomological

dimension of the Čech nerve. This dichotomy between polynomial and exponential growth is the sheaf-theoretic counterpart

of the P vs NP distinction.

6.2 The Growth Dichotomy

Definition 6.2 (Myriad Growth)

The myriad index I is the index set of local constraint regions in the Čech cover of problem X . Its growth

characterizes complexity:

Polynomial growth: |I| = O(nk) for fixed k — the number of local pieces grows polynomially in the input

size. This corresponds to problems with bounded treewidth, planarity, or other structural restrictions enabling

efficient decomposition.

Exponential growth: |I| = 2O(n) — the number of local pieces grows exponentially. This is the generic

case for NP-hard problems without special structure.

Theorem 6.3 (Complexity from Cohomology)

The time complexity of computing the global section ℱ(X) via the Čech equalizer is:

dim N |Nk| · cost(ℱ(Ui0···ik)))

Time(ℱ(X)) = Θ(∑

k=0

where Nk is the k-skeleton of the Čech nerve. Two key cases:

If H̃ k(X; ℱ) = 0 for all k > d (cohomology vanishes above degree d ) and |I| = poly(n) , then Time =

poly(n) — the problem is in P.

If H̃ k(X; ℱ) ≠ 0 for k = O(n) (non-trivial cohomology in high degrees) and |I| = 2O(n) , then Time =

2O(n) — the problem is outside P (in NP or harder).

Proof

The equalizer computation traverses the Čech nerve, processing all simplices. The cost of processing a k-simplex

Ui_0 ⋯ i_k is cost(ℱ(Ui_0 ⋯ i_k)) , which is polynomial by local tractability. The total cost is the sum over all

simplices of the nerve.

When cohomology vanishes above degree d , the Leray spectral sequence associated to the Čech cover

collapses at the Ed+1 page (Definition: a spectral sequence Erp,q is a collection of abelian groups with

differentials dr: Erp,q → Erp+r, q-r+1 ; it collapses at page if all differentials dr = 0 for r ≥ r_0 ). Collapse

r0

means the cohomological computation terminates at depth d . The Čech nerve has at most |I|d+1 simplices of

dimension ≤ d ; if |I| = poly(n) , the total count is polynomial, giving Time = poly(n) .

When cohomology is non-trivial for k = O(n) , the spectral sequence does not collapse early, and the Čech nerve

must contain simplices of dimension O(n) . With |I| ≥ 2 local pieces, the number of k-simplices is at least

, which for k = O(n) is at least exponential in n . Thus Time ≥ 2O(n) .

( |I|

k+1)

BACKGROUND: SPECTRAL SEQUENCES

A spectral sequence is an algebraic computational device for iteratively approximating cohomology groups. It

consists of a sequence of pages E_0, E_1, E2, … , where each page Er is a bigraded abelian group (or module)

equipped with a differential dr of bidegree (r, 1-r) . The next page is the cohomology of the current page: Er+1 =

H(Er, dr) . Under suitable convergence conditions, the sequence converges to the cohomology of the total complex:

E_∞ ≅ Gr(H*(X; ℱ)) .

The Grothendieck spectral sequence [10], [12], [13] arises when computing the derived functors of a composite R(F ∘

p,q = RpF(RqG(A)) and converges to Rp+q(F ∘ G)(A) . In the myriad decomposition

G) ; it has E2 page E2

context, the spectral sequence computes the global complexity from local complexity data (on the E2 page) through

a series of correction terms (higher differentials). The collapse condition means that no higher corrections are needed,

signaling that local-to-global assembly is polynomially efficient.

Concretely: if the Čech-to-derived spectral sequence collapses at page E2 , then Hk(X; ℱ) ≅ Hk(ČC(𝒰, ℱ)) —

Čech cohomology directly computes sheaf cohomology without correction. For problems with bounded treewidth or

planarity, this collapse occurs at E2 or E_3 , explaining their polynomial-time solvability.

Corollary 6.4 (Topological Phase Transition)

The complexity class of a problem is determined by the topology of its Čech nerve:

Polynomial (P-class): Problems with bounded treewidth (constraint graph is tree-like), planarity (constraint

graph embeds in the plane without crossings), or finite cohomological dimension ( Hk = 0 for large k) have

polynomial myriads. Their Čech nerves are topologically simple — few and bounded simplices — enabling

efficient global assembly.

Exponential (NP-class): Problems with unbounded constraint graph complexity, non-planar structure, or

non-vanishing cohomology in arbitrarily high degrees have exponential myriads. Their Čech nerves are

topologically complex — many high-dimensional simplices encoding global constraints that cannot be

resolved locally.

BACKGROUND: TREEWIDTH AND PLANARITY IN COMPLEXITY

The treewidth of a graph G is the minimum width of a tree decomposition — a tree-like arrangement of overlapping

cliques covering G . Graphs with treewidth k generalize trees (treewidth 1) and series-parallel graphs (treewidth 2).

By Courcelle's theorem, every graph property definable in monadic second-order logic can be decided in linear time

on graphs of bounded treewidth.

In sheaf-theoretic terms, bounded treewidth corresponds to a cover whose Čech nerve is a tree or tree-like structure

(few and simple simplices). The associated cohomology is trivial above degree 1, causing the spectral sequence to

collapse at E2 . The polynomial complexity of treewidth-bounded problems is thus a consequence of cohomological

triviality.

Planarity: By the Robertson-Seymour theorem and related results, planar graphs have bounded genus, which limits

the cohomological complexity of the Čech nerve to finitely many non-trivial degrees. Problems on planar graphs (such

as planar 3-colorability, solvable in polynomial time) correspondingly have polynomially many Čech simplices.

The topological phase transition occurs at the boundary between bounded and unbounded cohomological dimension

— precisely where the complexity class of the problem changes from P to NP. This is the geometric heart of the P vs

NP problem: it is a question about the topology of the solution space sheaf.

6.3 Geometric Classification of NP-Hardness

The myriad decomposition framework yields a precise, purely geometric classification of NP-hardness. The following

theorem consolidates the growth dichotomy into a single statement verifiable structurally, without appeal to any algorithm.

Theorem 6.5 (Geometric Classification of NP-Hardness)

Let X be a computational problem with sheaf representation ℱ over site (C, J). Define the nerve complexity

invariant:

κ(X) = limn → ∞ log|In| / log n

where I_n is the myriad index for instances of size n . Then:

Case A (Polynomial Myriad — P): If κ(X) < ∞ and H̃ j(N(𝒰); ℱ) = 0 for j > d = O(1) , then X ∈ P .

Time bound: O(|I|d+1) = poly(n) .

Case B (Exponential Myriad — NP): If |I| = 2Ω(n) , or H̃ j(N(𝒰); ℱ) ≠ 0 for j = Ω(n) , then X ∉ P

(in Sh(ℕ) ). Time bound: Ω(2n) .

X ∈ P ⟺ κ(X) < ∞ and cdim(ℱ) < ∞

Proof

Case A: With |I| = O(nk) and cohomological dimension d , the Čech nerve has at most |I|d+1 = O(nk(d+1))

non-degenerate simplices. Each is P-computable. The Leray spectral sequence for the cover converges to H*(X;

ℱ) and collapses at Ed+1 (no higher-order corrections). Equalizer computation terminates in polynomial time.

Case B: Non-vanishing cohomology for j = Ω(n) forces the nerve to contain Ω(n) -dimensional simplices.

With |I| ≥ 2 , the binomial count gives at least 2Ω(n) simplices — exponential time required.

The invariant κ(X) is intrinsic to the solution sheaf and is preserved under polynomial-time reductions, making

the classification reduction-stable.

BACKGROUND: GEOMETRIC CLASSIFICATION TABLE

Problem Structure κ(X) cdim(ℱ) Class

2-SAT Implication graph acyclic 1 1 P

Bounded treewidth CSP Tree decomposition, bag ≤ k 1 1 P

Planar 3-coloring Genus 0, finite Euler char 1 2 P

3-SAT (generic) Cyclic constraint graph ∞ Ω(n) NP-complete

Metric TSP Complete constraint graph ∞ Ω(n) NP-hard

Max-Cut (planar) Planar, genus 0 1 2 P

6.4 The Approximate Myriad Framework and Universal Approximators

The exact myriad equalizer is exponential in the worst case. A powerful approximation theory emerges by relaxing

exactness — connecting the sheaf framework to modern large-scale learning systems.

Definition 6.6 (δ-Compatible Section and Approximate Equalizer)

For tolerance δ > 0 , a δ-compatible section is a family (si)i ∈ I with si ∈ ℱ(Ui) satisfying the relaxed gluing

condition:

ℱδ(X) = {(si) ∈ ∏i ℱ(Ui) : ‖si|Uij − sj|Uij‖ < δ ∀i,j}

ℱ_δ(X) is non-empty for any δ > 0 and computable in polynomial time per local check. As δ → 0 , it

converges to the exact equalizer ℱ(X) .

Theorem 6.7 (Hierarchical Universal Approximation for NP)

For any NP optimization problem X with bounded Lipschitz objective f: 𝒮 → [0, M] and any ε, δ > 0 , there

exists an orchestrator implemented as a Mixture-of-Experts network:

K πφ(x, k) · Ek(x)

𝒪θ(x) = ∑k=1

where π_φ is a gating network and E_k are expert networks approximating local kernels, satisfying:

Px∼𝒟[|f(𝒪θ(x)) − f*(x)| < ε] > 1 − δ

provided depth(𝒪) = Ω(d̄ ) (average solution depth), K = Ω(|I|) experts, and sufficient training samples from

𝒟.

Proof Sketch

Each expert E_k approximates ℱ(Ui_k) via the Universal Approximation Theorem — valid since each local

solution space is compact and finite. The gating network π_φ learns to route x to relevant experts by the MoE-

UAT. Composition approximates the exact equalizer up to O(K-1/2) from expert error and O(Nsamples-1/2)

from training. The depth condition ensures the network can simulate the sequential assembly of a global section

— each layer corresponds to one sheaf restriction level.

Definition 6.8 (Average Solution Depth and Capacity Requirements)

Let X be an NP optimization problem with solution space , data distribution over instances, and myriad

S D

{Ui}i∈I x ∈S

decomposition . For each instance , let T(x) denote the dependency tree of the optimal

solution s^*(x) — the directed acyclic graph encoding which local sub-problems must be resolved in which

order to construct the global solution. The height of the dependency tree h(T(x)) is the length of the longest

path from root to leaf in T(x) .

The average solution depth is:

d̄ = 𝔼x ∼ 𝒟[h(T(x))]

where is the input distribution, denotes expectation, and h(T(x)) is the height of the optimal

D E[⋅]

¯d

dependency tree for instance x . This depth determines the required capacity of an orchestrator network

approximating the myriad equalizer:

Average

Depth d̄ Required Resources Approximability Class

O(1) Constant depth network Exact algorithm in P

FPTAS (Fully Polynomial-Time

O(log n) Poly-depth, poly samples

Approximation Scheme) exists

O(nα) , α <

PTAS (Polynomial-Time Approximation

Sub-exponential depth

Scheme) exists

1

Exponential depth, or large MoE with

APX-hard generally (no PTAS unless

O(n)

K = 2^{O(n)} experts

P=NP)

Here n = |X| is the input size, is a sub-linear exponent, MoE = Mixture of Experts (the orchestrator

α ∈(0, 1)

architecture of Theorem 6.7), and FPTAS/PTAS/APX-hard refer to approximability in the classical complexity

¯d depth(O) = Ω( ¯d)

sense [1]. The depth directly controls the required number of network layers: .

BACKGROUND: MUZERO AS MYRIAD ORCHESTRATOR

MuZero (Schrittwieser et al. 2020) solves Go — a PSPACE-complete problem with state space ≈ 10172 — through

a hierarchical architecture that directly instantiates the approximate myriad framework:

Local kernels (Myriad): Life-death patterns, joseki, local tactical sequences — P-computable pattern matching.

Each corresponds to a local sheaf section ℱ(Ui) .

Dynamics model (Restriction maps): The learned world model predicts next-state from action, implementing

sheaf restriction maps ρUi, Uij in learned latent space.

MCTS (Approximate equalizer): Monte Carlo Tree Search samples from the global solution sheaf. The policy

network acts as gating π_φ ; the value network is the expert evaluator. Each rollout tests a δ-compatible section

candidate.

Move selection (Global section): Assembles local evaluations into a global decision — the approximate

equalizer output.

MuZero's approximation error scales as O(simulations-1/2) , matching the O(Nsamples

-1/2) bound of Theorem 6.7.

Go's local pattern structure gives a small effective cohomological dimension per region, enabling a polynomial-

parameter learned representation of an exponential state space. This is the myriad framework in action: exponential-

looking problem, polynomial effective boundary.

6.5 Comparison with Parameterized Complexity

The myriad decomposition framework did not emerge in a vacuum. The central insight — that NP problems decompose into

local polynomial sub-problems with global assembly as the source of hardness — is shared by the theory of parameterized

complexity, developed systematically by Downey and Fellows [42]. This section makes the relationship explicit and precise,

identifying both where the two frameworks agree and where they diverge.

Treewidth and Bounded-Treewidth CSP

A tree decomposition of a graph G = (V, E) is a tree T whose nodes are labeled by subsets (bags) , with the

Bt ⊆V

properties that every vertex appears in some bag, every edge has both endpoints in some bag, and the bags containing any

fixed vertex form a connected subtree. The treewidth tw(G) is the minimum over all tree decompositions of the

maximum bag size minus one. Small treewidth captures "nearly tree-like" structure.

Courcelle's theorem [43] is the flagship result of this area: every graph property expressible in monadic second-order logic

(MSO₂) is decidable in linear time on graphs of bounded treewidth. Many NP-complete problems on general graphs (graph

coloring, Hamiltonian path, independent set) become linear-time on bounded-treewidth graphs. The algorithmic mechanism

is exactly the myriad decomposition: the tree decomposition provides a cover of the graph by small bags (the Ui ), each

sub-problem on a bag is polynomial (indeed linear) time, and the tree structure ensures the Čech nerve is a tree — hence

H^k = 0 for all (trees are contractible). By Theorem 6.5 Case A, this is exactly the polynomial-myriad condition.

k ≥1

Theorem 6.9 (Myriad–Treewidth Correspondence)

Let X be a constraint satisfaction problem (CSP) on graph G with treewidth (fixed). Then:

tw(G) ≤k

1. The tree decomposition of G gives a natural myriad cover indexed by tree nodes, with

{Ut}t∈T

(polynomial).

|I| = |T| ≤|V |

2. Each local sub-problem is solvable in time O(k^k) (exponential in k, but polynomial for fixed k).

F(Ut)

H j(N (U); F) = 0

3. The Čech nerve of the tree decomposition cover is a tree, hence contractible: for all

.

j ≥1

X ∈P O(kk ⋅|V |)

4. By Theorem 6.5, for fixed k. Total time: — matching the best known FPT

algorithms [42].

Conversely, if tw(G) is unbounded (grows with |V| ), then the Čech nerve of the natural myriad cover has

growing cohomological dimension, and Theorem 6.5 Case B applies: X is likely NP-hard.

Proof sketch

For (1)–(2): standard tree decomposition algorithm (see Bodlaender [44]). For (3): the Čech nerve of a tree cover

is the tree itself (since the only non-empty intersections are pairs for adjacent tree nodes, and higher

Ut ∩Ut′

intersections are empty by the tree property). Contractibility of trees gives the vanishing cohomology. For (4):

apply the dynamic programming algorithm along the tree, which corresponds exactly to computing global sections

□

F

of via the sheaf-gluing property along the tree nerve.

FPT and the Myriad Invariant