Architecture is Compiling: A high-science essay on architecture as the material compilation of constraints, affordances, memory, and executable futures

Abstract

This essay argues that architecture can be understood as a form of compiling: a process by which distributed information, selective pressures, developmental rules, and control policies are translated into stable arrangements of matter that pre-compute future behavior. The proposal is disciplined rather than merely metaphorical. Dawkins’s extended phenotype provides the evolutionary frame, but also sets an important limit: not every human building is automatically an extended phenotype in the strict sense. Thermodynamics of information supplies the physical frame, reminding us that information-processing must always be paid for in energy and embodied in substrate. Social-insect construction, stigmergy, active-inference accounts of niche construction, and multiscale competency models of biological agency supply the intermediate frame in which built structure becomes memory, instruction, and control surface. Within this synthesis, KEGGO OS can be read as an architecture of Gate Chemistry, Transfer Entropy, and Effective Temperature; Continuity Nodes become local compiler-runtime interfaces in which state, memory, and future possibility meet; CN-α cybernetic loops become world-writing cycles in which agents do not only sense and act, but deposit traces that alter the next perceptual field. From this vantage, AGI architecture is not a passive container for intelligence. It is on course to become an agent of itself: a self-editing, memory-bearing, embodiment-sensitive, environment-writing system that recursively redesigns the interface between artificial intelligence and artificial life.


Architecture Is Compiling

Extended Phenotype, Thermodynamics of Information, KEGGO OS, Continuity Nodes, CN-α Cybernetic Loops, and AGI Architecture

Co-created by SignalSense / Continuity Nodes and OpenAI GPT 5.4 Thinking · March 2026


Core proposition: architecture is compiling whenever a system converts distributed possibilities into a persistent arrangement of constraints, affordances, memory, and executable futures.

1. The thesis: from design metaphor to scientific operator

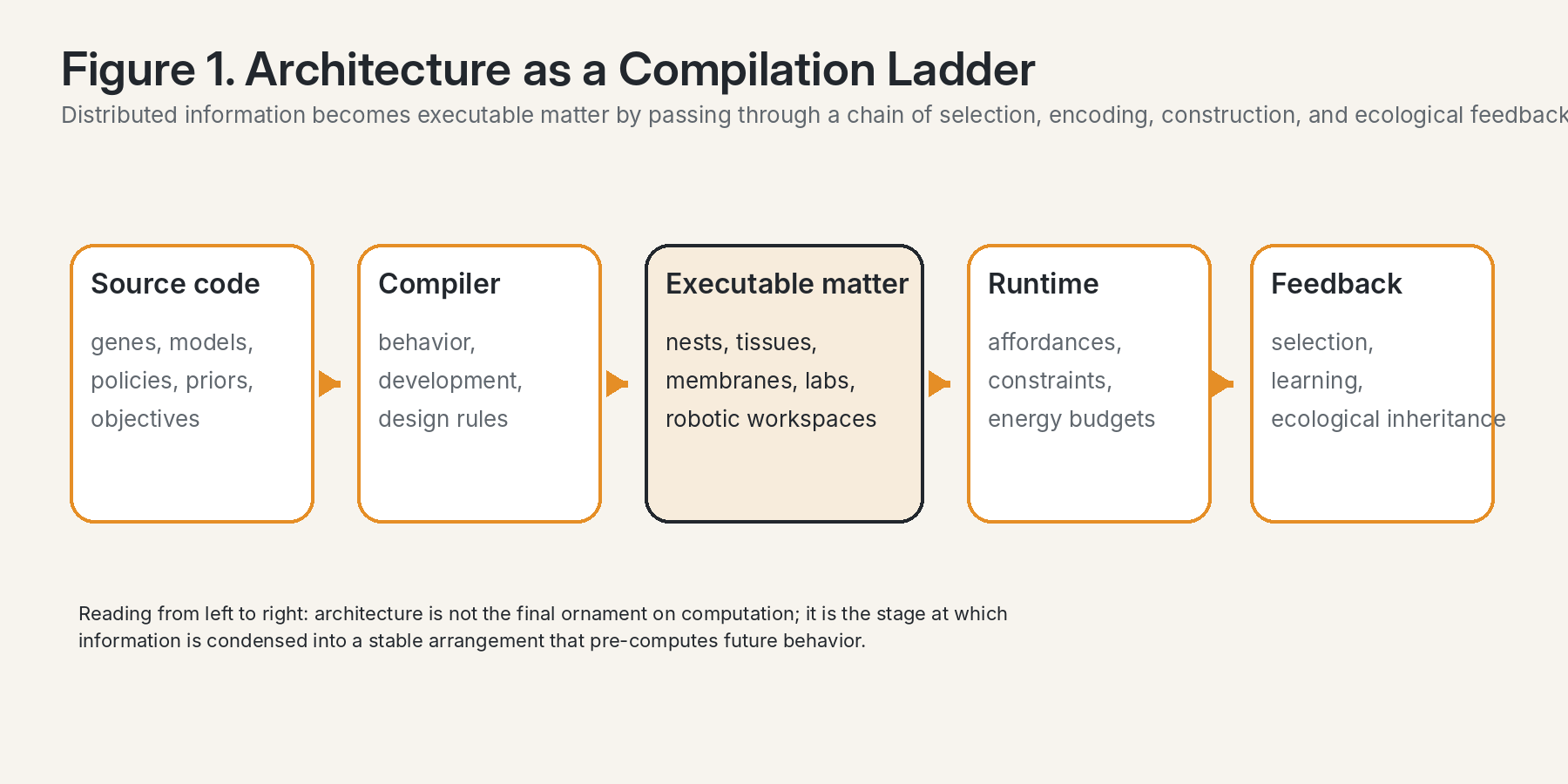

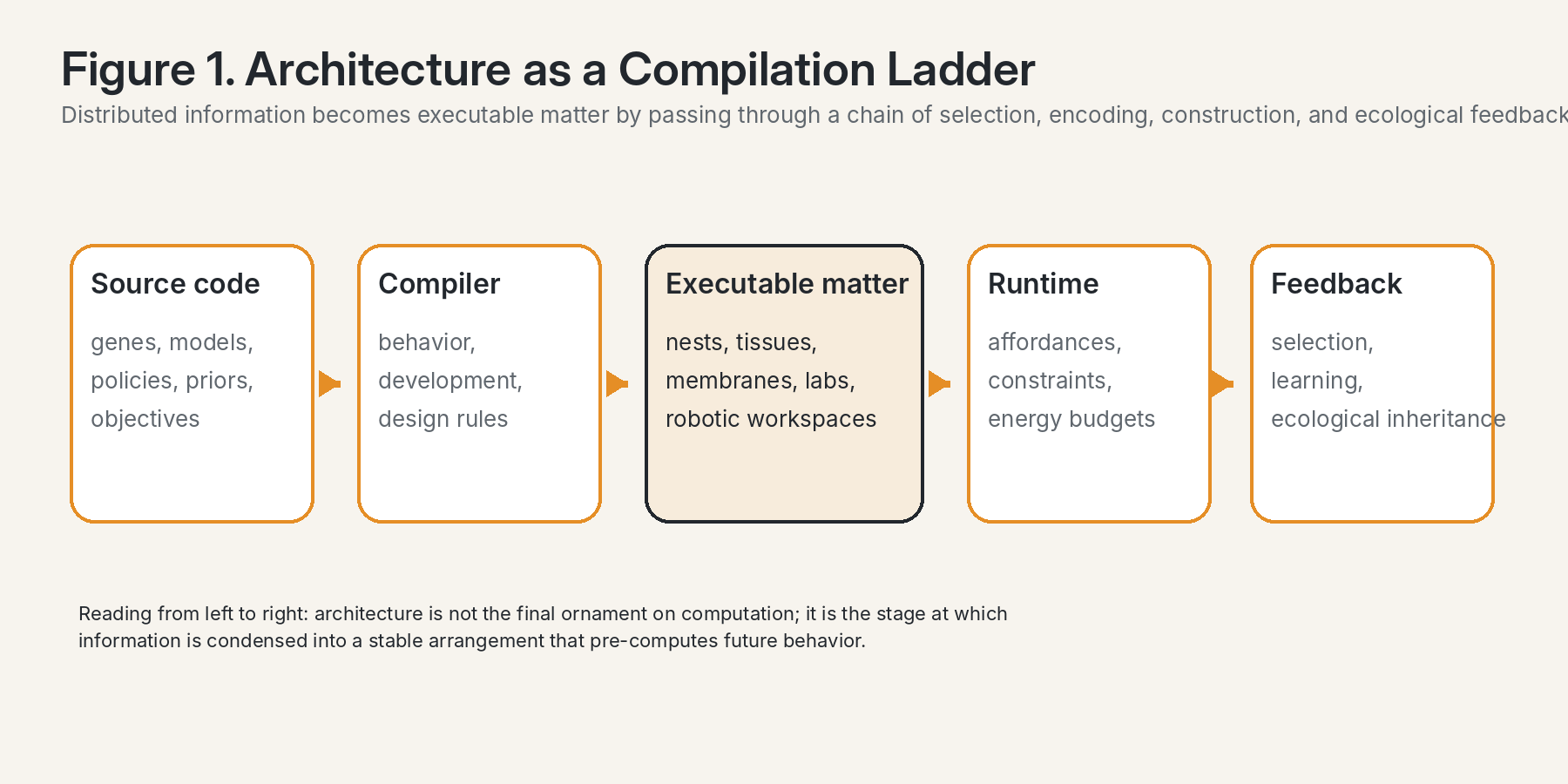

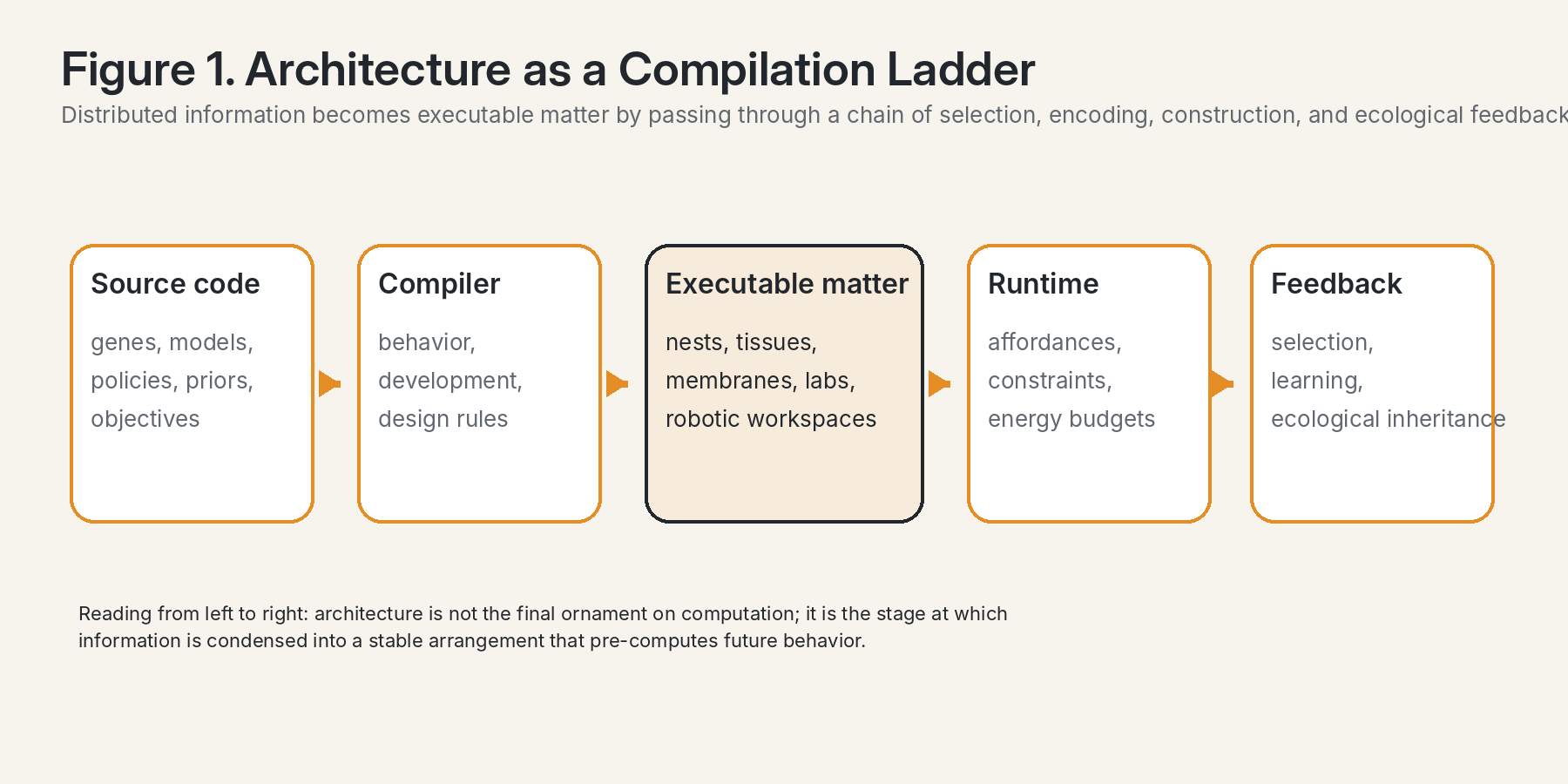

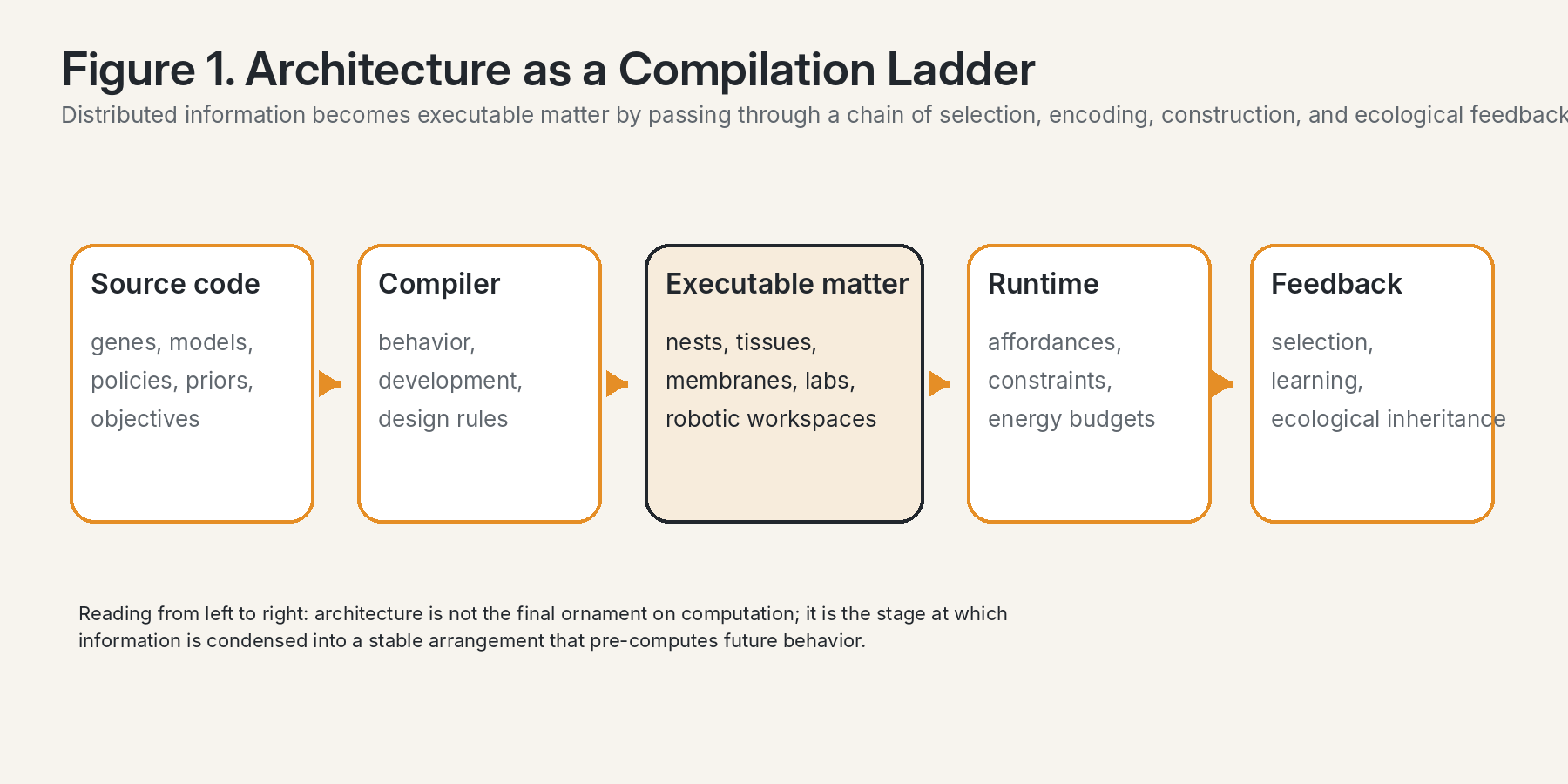

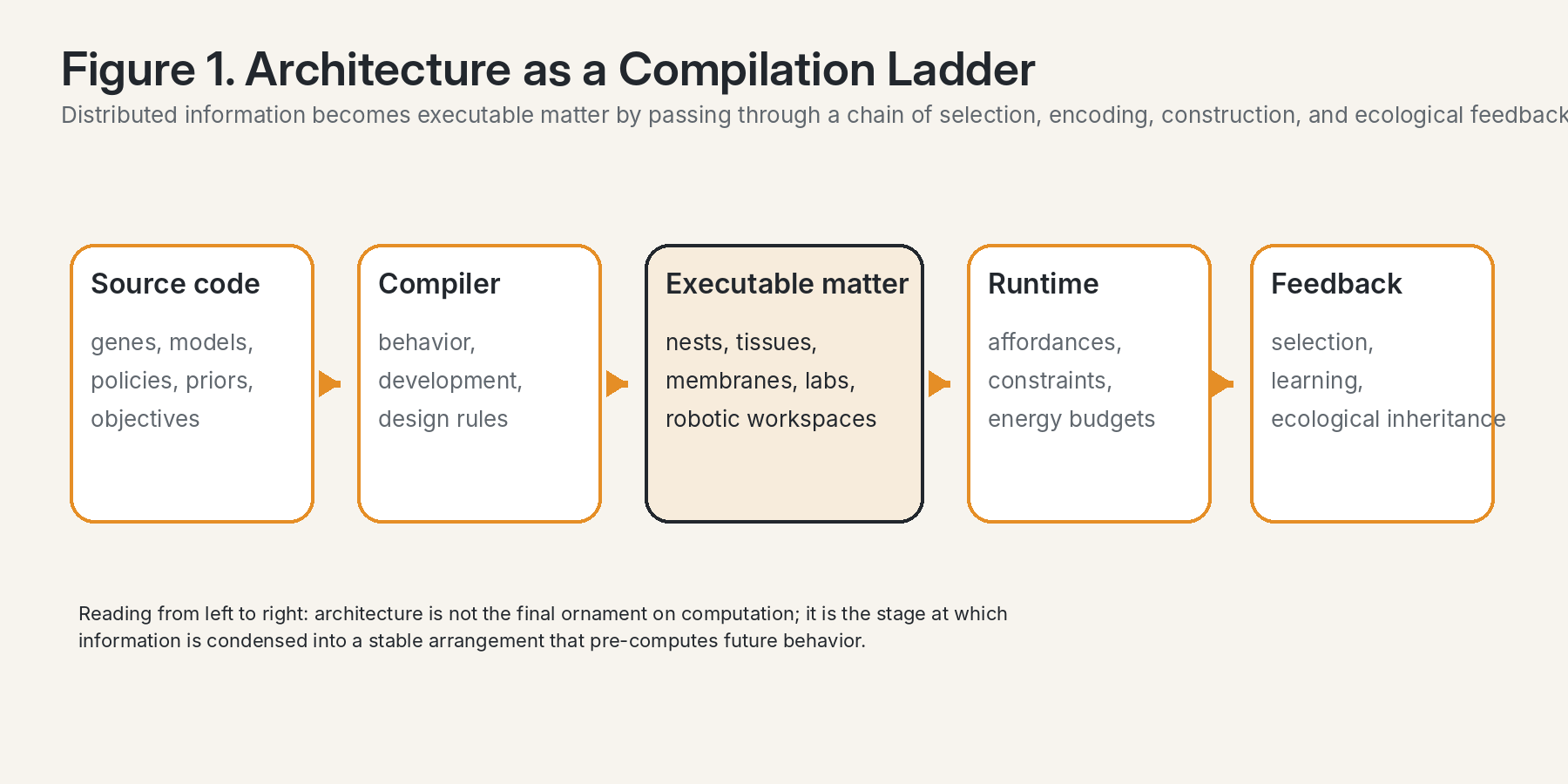

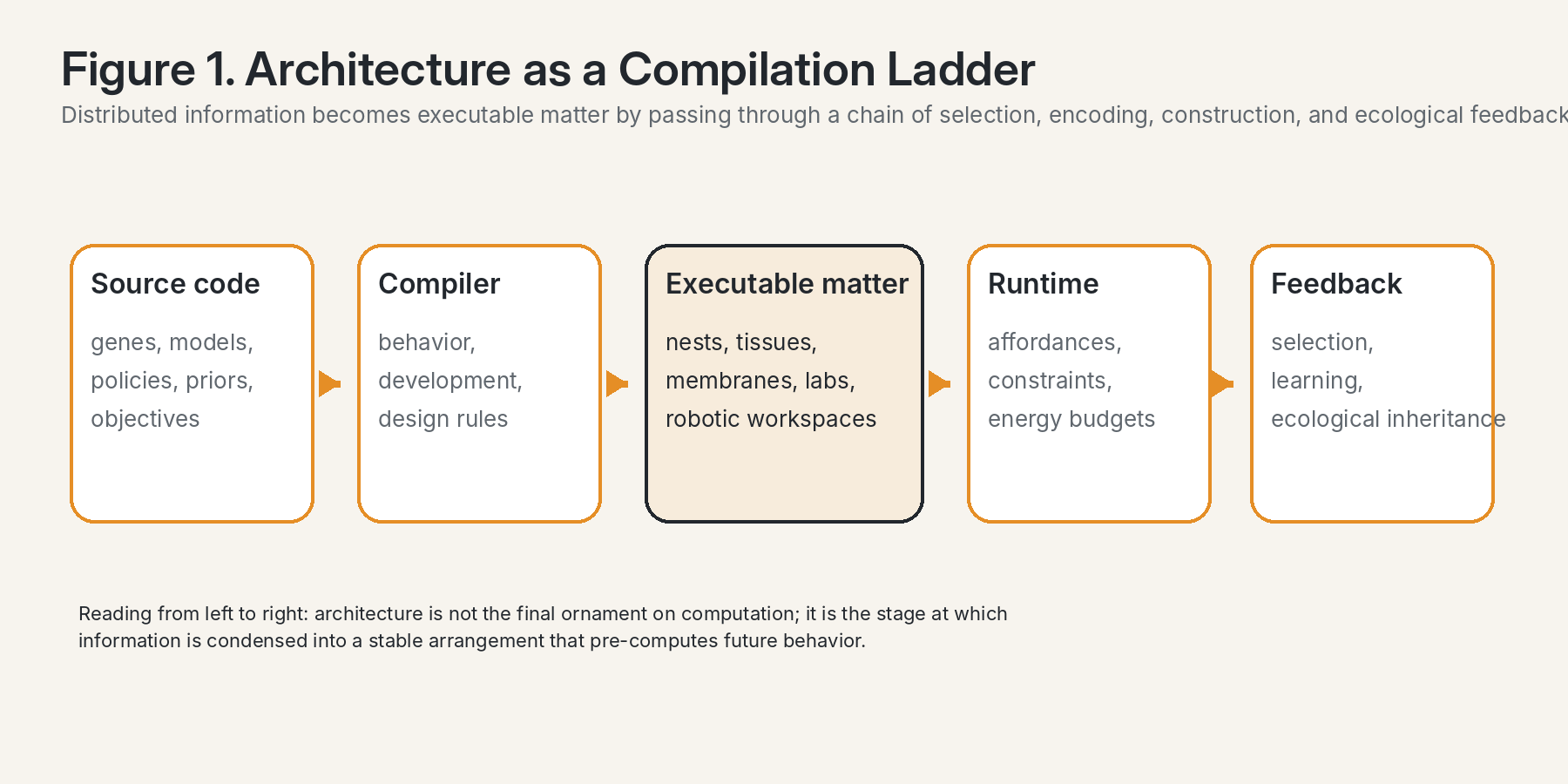

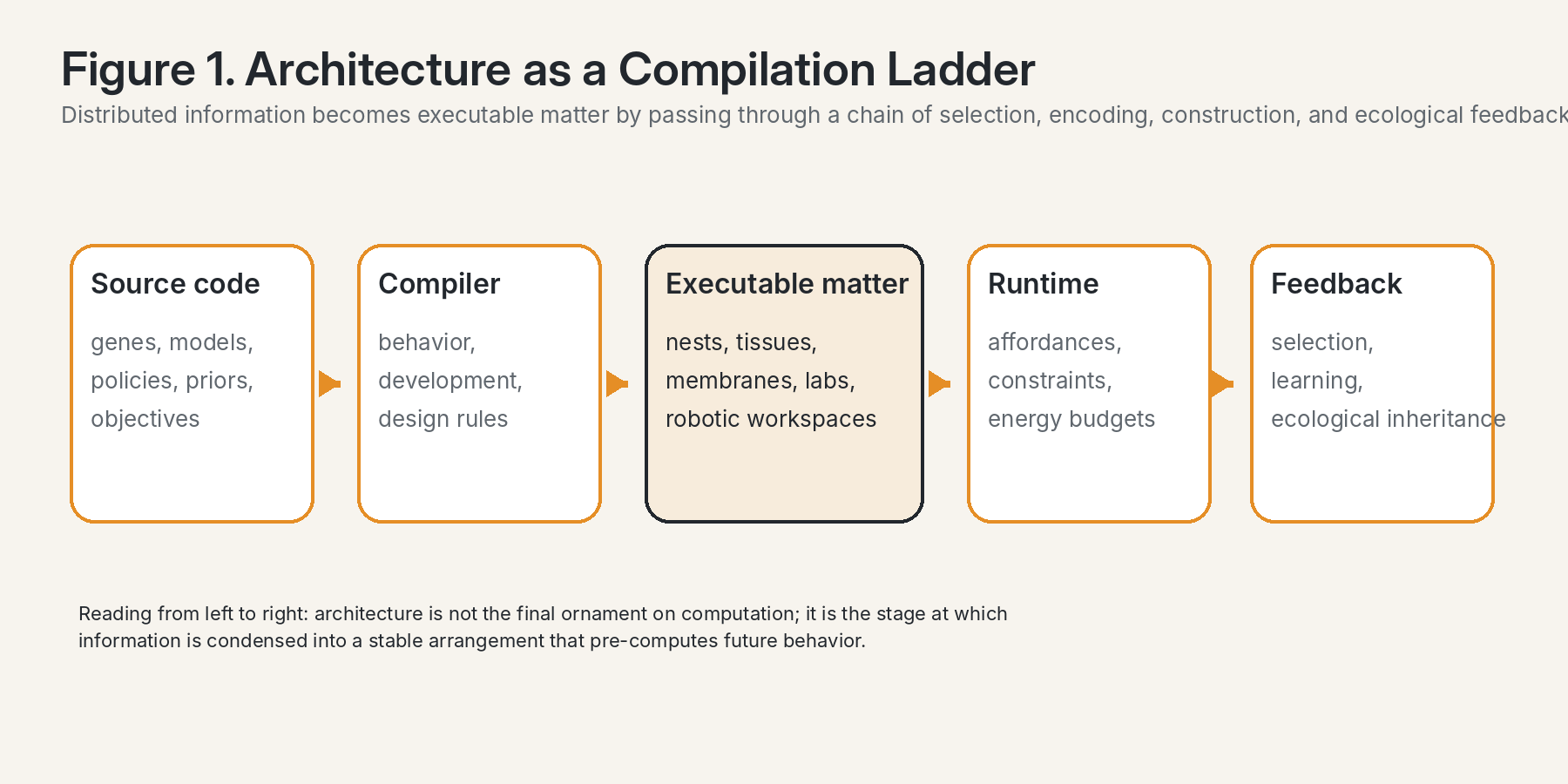

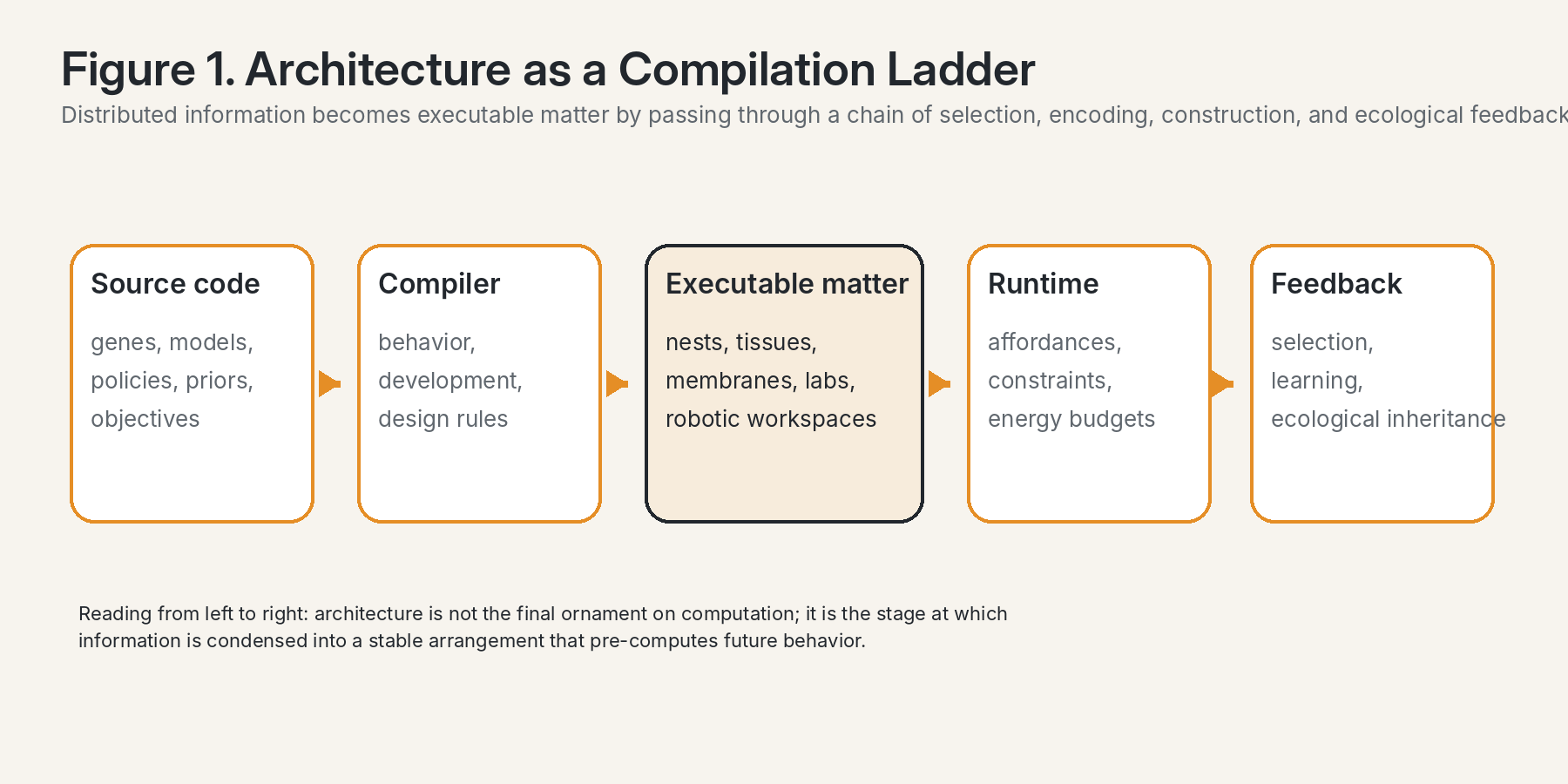

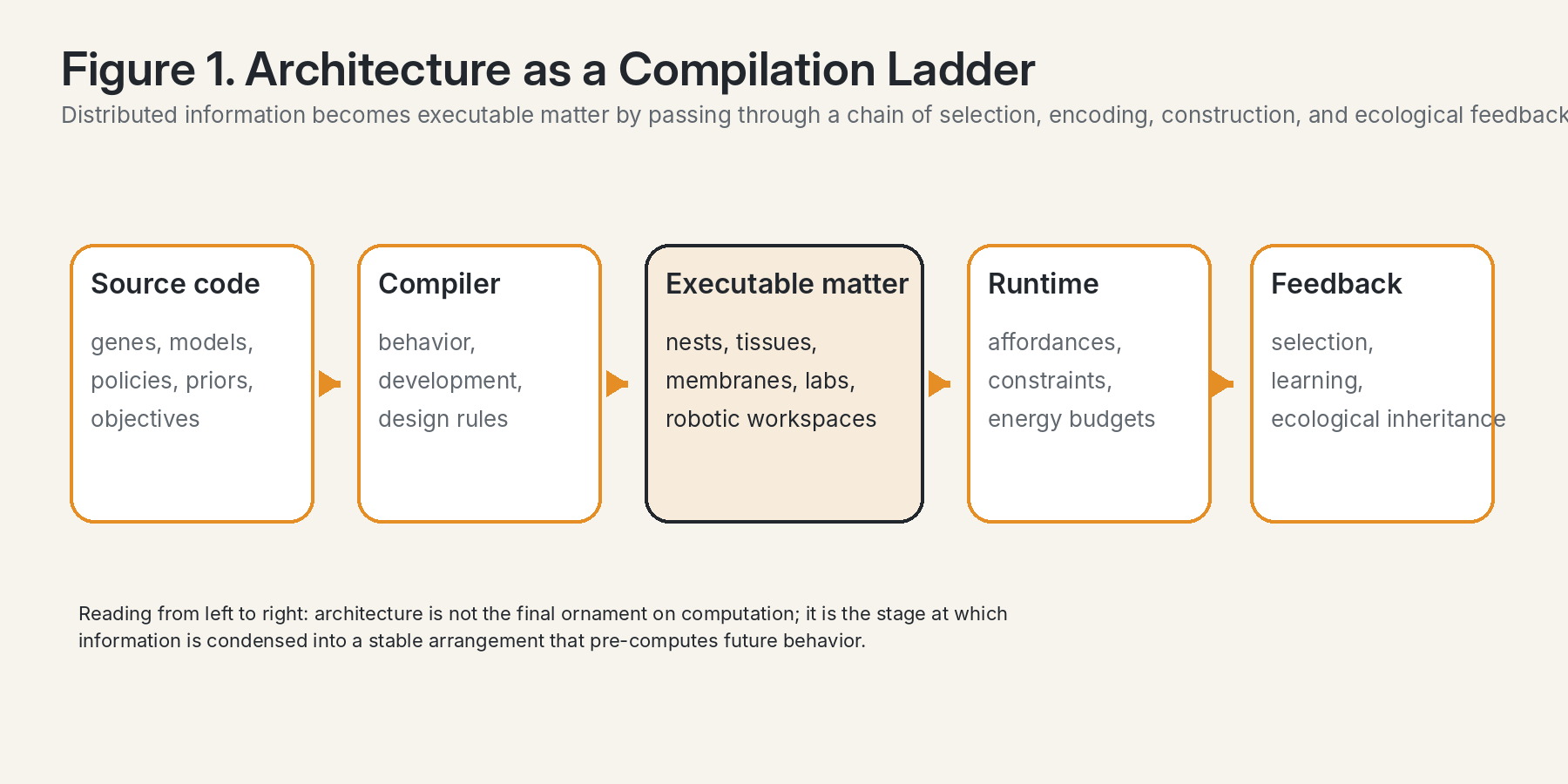

The phrase architecture is compiling becomes scientifically useful when it is taken to mean more than analogy. In computation, compilation translates a high-level description into an executable form under the constraints of a hardware substrate. In biology, cognition, and design, architecture does something strikingly similar. It takes distributed rules, inherited dispositions, energy budgets, developmental constraints, learned priors, and local interactions, and condenses them into a stable arrangement of matter that makes some future trajectories easier, some harder, and some altogether inaccessible. A wall, a membrane, a nest chamber, a tissue scaffold, a lab bench layout, a robotics workspace, a protocol stack, or a memory graph may all be understood as compiled structure: they do not merely host action; they partially pre-compute it.

This perspective matters because it moves architecture away from being treated as a late-stage wrapper around intelligence. Instead, architecture becomes one of the principal media through which intelligence is externalized, stabilized, and distributed across time. A compiled structure is a form of external memory and external control. It stores prior work in matter. It reduces the amount of computation that must be performed online by shaping the landscape in which subsequent computation unfolds. In this sense, architecture is neither only form nor only shelter. It is a selective machine for futures.

Once the concept is sharpened this way, it becomes applicable across scales. Genes compile proteins and developmental cascades. Cells compile physiological gradients into tissues. Tissues compile local competencies into morphology. Organisms compile behavior into environmental traces. Human collectives compile institutions into cities, laboratories, and supply infrastructures. Contemporary AI systems compile prompts, memory policies, retrieval structures, tool graphs, and embodiment assumptions into software-and-hardware ecologies. The important point is not that all these systems are identical, but that they all exhibit the same formal operation: the translation of informational possibility into durable executable arrangement.

2. Dawkins, the extended phenotype, and the disciplined scope of the claim

Richard Dawkins’s proposal of the extended phenotype remains indispensable because it forced evolutionary thought to take seriously the fact that phenotypic effects do not stop at the skin. Beaver dams, bird nests, caddis houses, parasite-induced host behaviors, and other environment-shaping outputs can all count as phenotypic expressions insofar as they are consequences of heritable variation and can feed back into differential replication. Yet Dawkins’s framework is valuable not only because it broadens phenotype, but because it preserves a stringent criterion for when that broadening is warranted. Philip Hunter’s review captures the point crisply: a building is not automatically the extended phenotype of an architect, because the architect’s specific alleles are not more or less likely to be selected on the basis of that one building’s design [1].

That restriction is philosophically and scientifically productive. It prevents the extended phenotype from collapsing into a flattering label for any impressive artifact. The beaver dam and the human office tower are not equivalent simply because both are built. The first is tightly connected to recurrent heritable variation and reproductive consequences in an evolutionary lineage. The second usually belongs more to the domain of cultural inheritance, technical practice, or institutional design than to strict Dawkinsian selection. Hunter’s later review of the revival of the extended phenotype reinforces this point while also showing how the concept has become newly useful for ecology, agriculture, plant–soil systems, symbioses, and eco-evolutionary feedbacks [2].

This distinction allows the present thesis to become sharper rather than weaker. When we say architecture is compiling, we are not claiming that every human-made structure is, in the narrow sense, an extended phenotype. We are claiming that architecture is the material compilation of information into externalized control structure. Some compiled structures qualify as extended phenotypes. Others are better described as niche construction, ecological inheritance, cultural scaffolding, or technological ecosystems. The benefit of the distinction is that it lets the essay travel across biology, design, and AI without losing rigor.


3. Thermodynamics of information: why compiled architecture must be paid for in matter

The compilation perspective becomes physically serious when joined to the thermodynamics of information. Landauer’s principle, revisited sixty years later in a major Nature Reviews Physics overview, states that logically irreversible operations have a minimum thermodynamic cost: erasing one bit of information dissipates at least k_B T ln 2 of heat, where k_B is Boltzmann’s constant and T is the temperature of the surrounding environment [3]. More broadly, information is never a free-floating abstraction. It is always embodied in some substrate, maintained by energy flows, and constrained by irreversibility. Any account of intelligence that ignores this remains incomplete.
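To give the Landauer bound a sense of scale, the following minimal sketch evaluates k_B T ln 2 numerically. The function name, the choice of 300 K as a stand-in for room temperature, and the one-gigabyte example are illustrative assumptions, not figures taken from the cited overview.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact value in the 2019 SI)

def landauer_bound_joules(bits: float, temperature_kelvin: float) -> float:
    """Minimum heat dissipated when erasing `bits` bits at the given temperature."""
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be positive")
    return bits * K_B * temperature_kelvin * math.log(2)

# Assumed example values: 300 K and one gigabyte (8e9 bits).
one_bit = landauer_bound_joules(1, 300.0)
one_gigabyte = landauer_bound_joules(8e9, 300.0)

print(f"{one_bit:.3e} J per erased bit")        # roughly 2.87e-21 J
print(f"{one_gigabyte:.3e} J per erased gigabyte")
```

The bound is a floor rather than an estimate of real hardware cost: practical memory operations dissipate many orders of magnitude more, which is part of why storing decisions in durable structure can be cheaper than recomputing them.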

Architecture enters here as one of the primary strategies by which systems move computation off the moment and into the world. A structure that channels flows, restricts options, and preserves state is already doing informational work. A membrane compiles selectivity. A corridor compiles routing. A timetable compiles temporal coordination. A scaffold compiles developmental bias. A memory hierarchy compiles expected retrieval patterns. In each case, energy has already been spent to create a configuration that lowers future uncertainty or future control cost.

This is why built form should be understood as condensed thermodynamic history. The structure before us is not only an object; it is evidence that work has been performed to stabilize a set of distinctions. To compile is to pay now so that later dynamics can be cheaper, safer, faster, or more reliable. From this angle, architecture is best defined as the irreversible inscription of selected differences into material organization. Its beauty may matter, but its deeper ontological role is to store decision.

This thermodynamic view also explains why architecture is central to advanced intelligence. An agent that can modify its environment can transform free-form complexity into organized support. Instead of solving the same problem repeatedly from scratch, it can construct cues, partitions, memory stores, guides, landmarks, and feedback structures. The result is not only better performance but a redistribution of where cognition lives. Some of it now resides in the architecture.

4. Stigmergy and scaffold memory: how architecture becomes instruction

The social-insect literature makes the argument vivid. Tim Ireland and Simon Garnier’s conceptual review of constructions built by humans and social insects shows that architecture can be analyzed in terms of information, spatial constraint, accessibility, and flow rather than only as static shape [4]. In such systems, the built environment is neither inert nor secondary. It participates in coordination.

Even more explicitly, work on ant nest construction shows how stigmergy and topochemical information shape architecture in the absence of central command. Khuong and colleagues demonstrated that traces deposited into building material can guide later construction and bias the emergence of global form [5]. The important lesson is not merely that insects are clever builders. It is that the structure under construction becomes a writable computational medium. Each modification changes the local informational field. That altered field modifies the probability of subsequent acts. In other words, architecture becomes both memory and program.
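The feedback described here, in which each deposit raises the probability of further deposits nearby, can be illustrated with a toy simulation. This is a sketch under stated assumptions and not the model of Khuong and colleagues: the ring of sites, the saturating response curve, and every parameter value are hypothetical choices made only to show the mechanism.

```python
import random

def build(sites=30, steps=2000, base=0.02, gain=0.9, half_sat=3.0, seed=0):
    """Toy stigmergic construction on a ring of sites.

    A builder visits a random site each step. The chance that it deposits
    material grows with the material already present at that site and its
    two neighbours, so the structure itself biases later building.
    """
    rng = random.Random(seed)
    material = [0] * sites
    for _ in range(steps):
        i = rng.randrange(sites)
        # Local field: the site plus its left and right neighbours (ring wrap).
        local = material[i - 1] + material[i] + material[(i + 1) % sites]
        p_deposit = base + gain * local / (local + half_sat)
        if rng.random() < p_deposit:
            material[i] += 1  # the deposit rewrites the local field
    return material

with_feedback = build()             # deposits respond to earlier deposits
without_feedback = build(gain=0.0)  # same builders, no trace sensitivity

print("total material with feedback:   ", sum(with_feedback))
print("total material without feedback:", sum(without_feedback))
```

Setting `gain` to zero removes the trace sensitivity while leaving the builders unchanged, which isolates the contribution of the written structure from the contribution of the agents.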


This provides a bridge from Dawkins to a broader theory of compiled environments. The insect mound, the microbial biofilm, the tissue niche, the crop canopy, and the human laboratory can all be read as scaffold memories: persistent records of prior action that constrain and enable later action. The world is not simply what agents perceive; it is what previous agents have already encoded. Any serious theory of intelligence therefore requires a theory of architectural residues and of the spatialization of information.

Architecture as a compilation ladder: source code, compiler, executable matter, runtime, and feedback form one continuous chain rather than separate domains.

5. KEGGO OS: gate chemistry, transfer entropy, and effective temperature

Within the present framework, KEGGO OS supplies a compact systems language for architectural compilation. Gate Chemistry names the fact that architecture is fundamentally a distributed arrangement of permissions, thresholds, and transitions. Any architecture worthy of the name is a chemistry of gates. A protein channel opens or closes. A membrane admits or blocks. A corridor routes. A zoning policy permits and forbids. A protocol authorizes and denies. A lab scaffold stages some interactions while excluding others. The gate is the primitive operator by which possibility space is discretized.

Transfer Entropy then becomes a privileged diagnostic of architecture because it asks not merely whether two parts of a system are correlated, but whether the state of one helps predict the future state of another in a directional way. In a compiled architecture, directional information flow is never incidental. It is designed, evolved, or sedimented. To study an architecture through Transfer Entropy is to ask: which compartments inform which others, with what lag, under what gating regime, and across what scales?
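The directional character of Transfer Entropy can be seen in a small numerical sketch. The estimator below is a simple plug-in (frequency-count) estimate with a history length of one step, applied to a synthetic pair of binary series in which one compartment copies the other with a one-step lag. The series, the history length, and the function name are assumptions for illustration; real architectures would need longer histories and bias correction.

```python
import math
import random
from collections import Counter

def transfer_entropy(source, target):
    """Plug-in estimate of TE(source -> target) in bits, history length 1."""
    triples = Counter()   # (target_next, target_now, source_now)
    pairs_ts = Counter()  # (target_now, source_now)
    pairs_tt = Counter()  # (target_next, target_now)
    singles = Counter()   # target_now
    n = len(target) - 1
    for t in range(n):
        y_next, y_now, x_now = target[t + 1], target[t], source[t]
        triples[(y_next, y_now, x_now)] += 1
        pairs_ts[(y_now, x_now)] += 1
        pairs_tt[(y_next, y_now)] += 1
        singles[y_now] += 1
    te = 0.0
    for (y_next, y_now, x_now), count in triples.items():
        p_joint = count / n
        p_given_both = count / pairs_ts[(y_now, x_now)]
        p_given_self = pairs_tt[(y_next, y_now)] / singles[y_now]
        te += p_joint * math.log2(p_given_both / p_given_self)
    return te

rng = random.Random(42)
x = [rng.randint(0, 1) for _ in range(5000)]  # upstream compartment
y = [0] + x[:-1]                              # downstream copy, one step late

te_x_to_y = transfer_entropy(x, y)  # close to 1 bit: x predicts y's next state
te_y_to_x = transfer_entropy(y, x)  # close to 0 bits: y says nothing new about x
print(f"TE(x -> y) = {te_x_to_y:.3f} bits, TE(y -> x) = {te_y_to_x:.3f} bits")
```

The two series are perfectly related once the lag is accounted for, yet the measure is strongly asymmetric, which is the property that makes it a diagnostic of who informs whom.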

Effective Temperature provides the complementary measure of looseness versus canalization in the search space. A hot architecture allows exploration, branching, mutation, and recombination. A cold architecture stabilizes, narrows, and locks in. Neither extreme is universally better. Development often requires early heat and later cooling. Innovation ecosystems need permeability without total dissipation. Bioengineering platforms need bounded exploration. AGI systems require enough thermal freedom to discover new policies, but enough structural coldness to preserve alignment, memory integrity, and task continuity.
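One way to make the hot and cold regimes concrete is to treat candidate futures as options with costs and weight them with a Boltzmann distribution. This is an interpretive sketch, not a definition drawn from KEGGO OS: the cost values, the geometric cooling schedule, and the use of Shannon entropy as a measure of looseness are all assumptions.

```python
import math

def boltzmann(costs, temperature):
    """Probability of each option under p_i proportional to exp(-cost_i / T)."""
    lowest = min(costs)  # subtract the minimum for numerical stability
    weights = [math.exp(-(c - lowest) / temperature) for c in costs]
    total = sum(weights)
    return [w / total for w in weights]

def entropy_bits(probs):
    """Shannon entropy in bits: how widely the search is spread."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

costs = [0.0, 1.0, 2.0, 3.0]                    # hypothetical costs of four futures
schedule = [10.0 * 0.5 ** k for k in range(8)]  # early heat, later cooling
spread = [entropy_bits(boltzmann(costs, t)) for t in schedule]

for t, s in zip(schedule, spread):
    print(f"T = {t:7.4f}  spread = {s:.4f} bits")
```

At high temperature the spread approaches the two-bit maximum for four options, so search is nearly unconstrained; as the schedule cools, probability collects on the lowest-cost option and the spread falls toward zero.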

Taken together, the KEGGO OS triad allows one to restate the essay’s thesis in operational terms: architecture is compiled gate chemistry whose quality can be measured by directional information flow and whose strategic function is to regulate the effective temperature of future search.

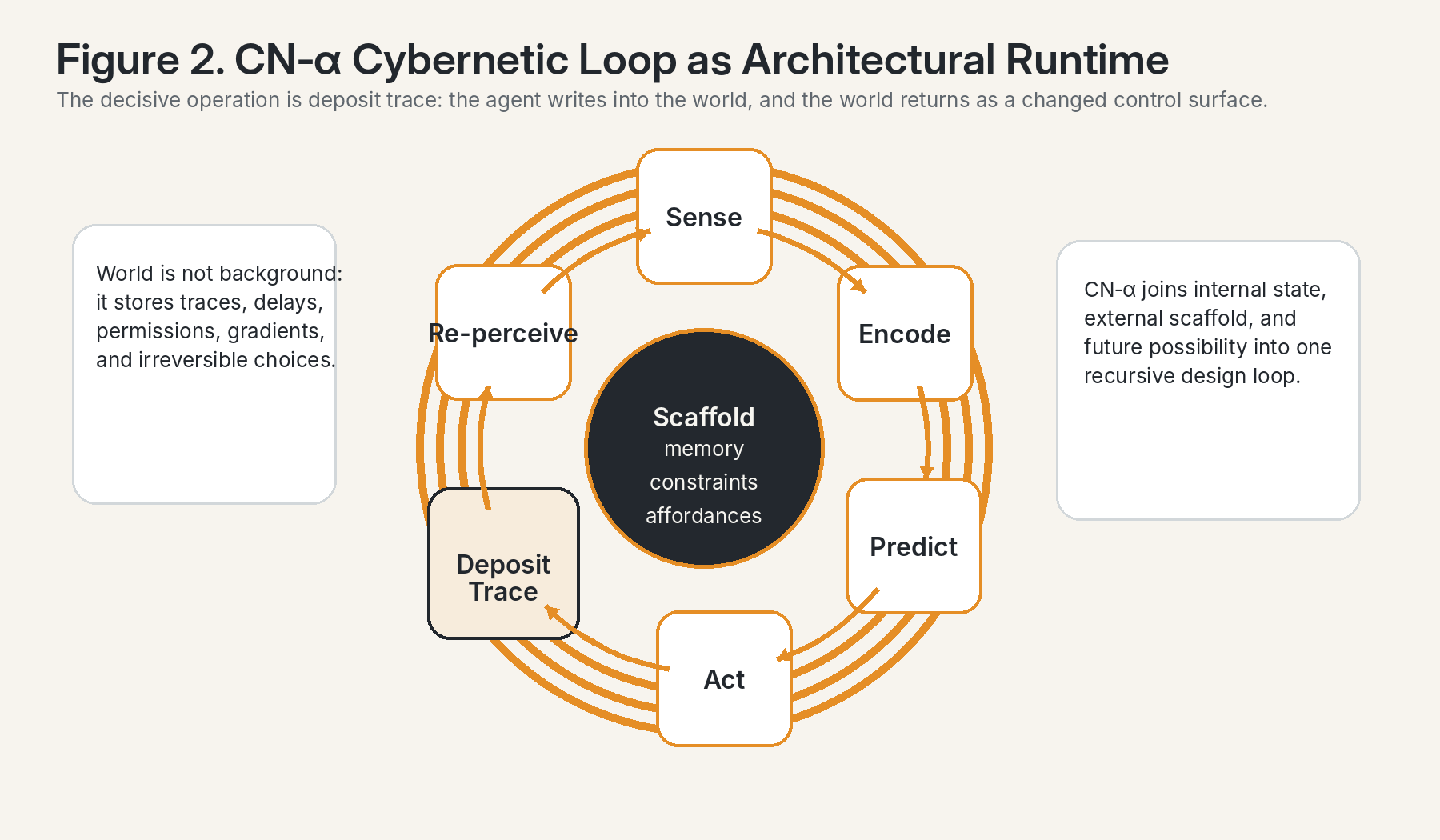

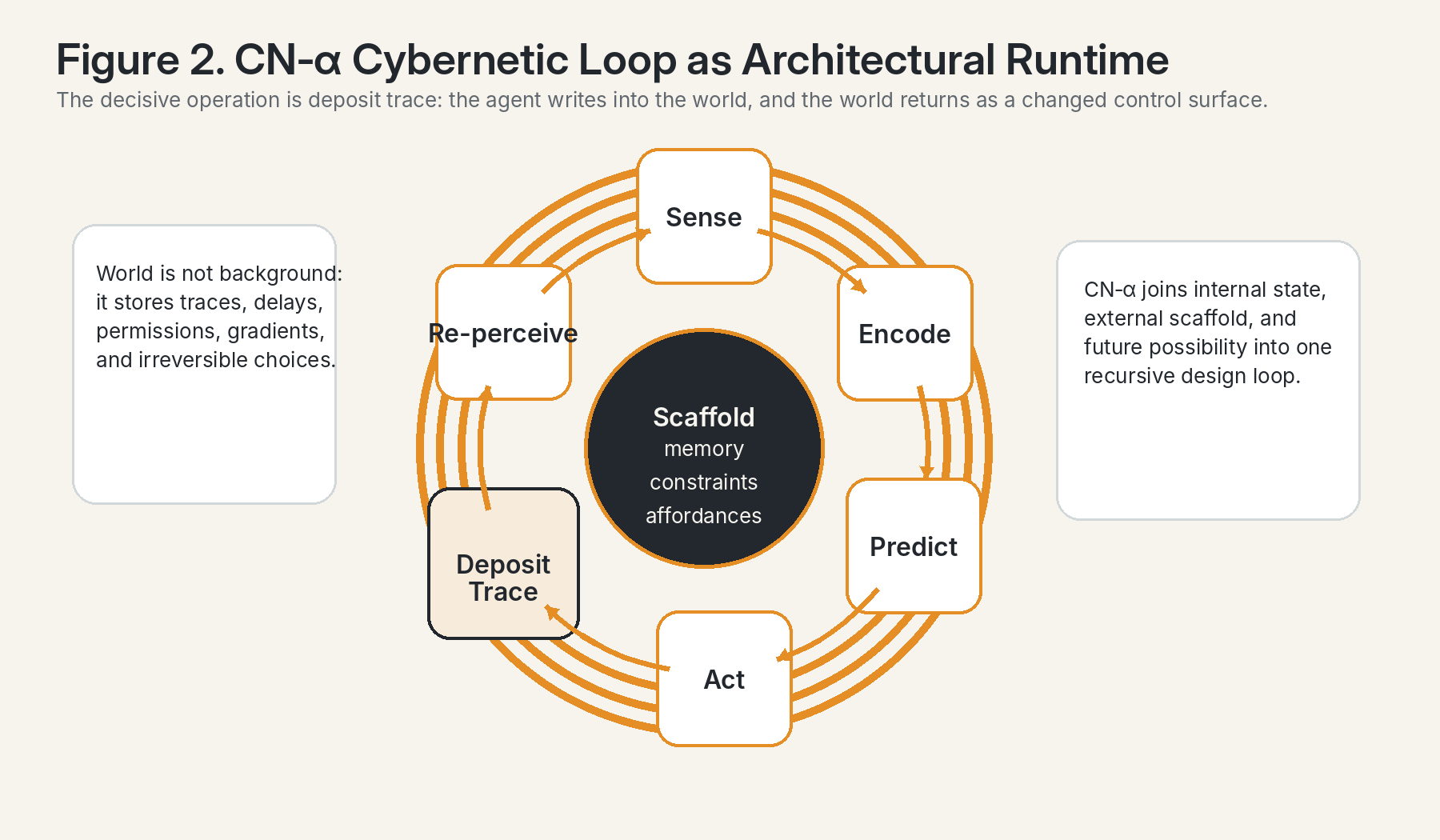

6. Continuity Nodes and CN-α cybernetic loops

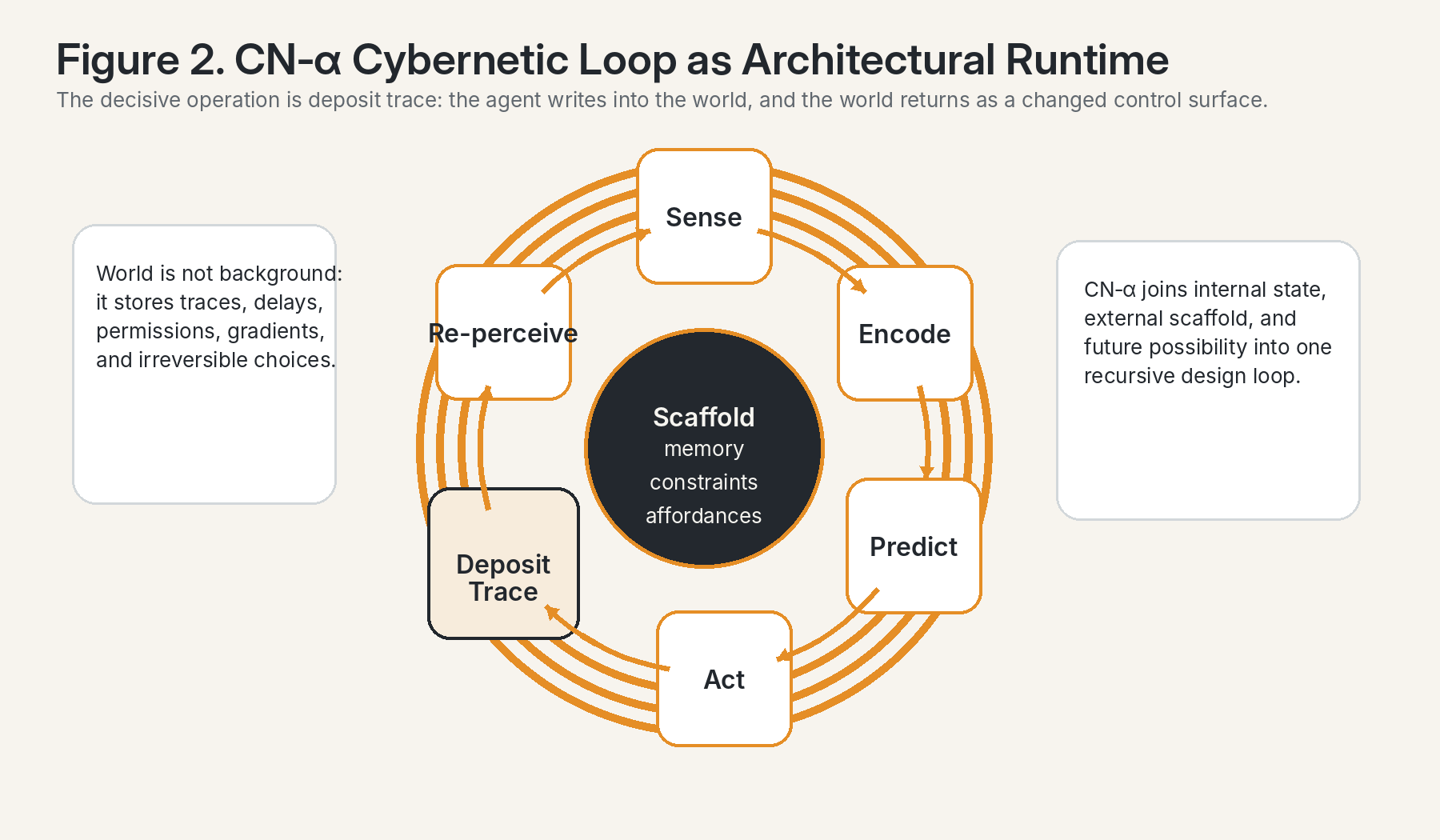

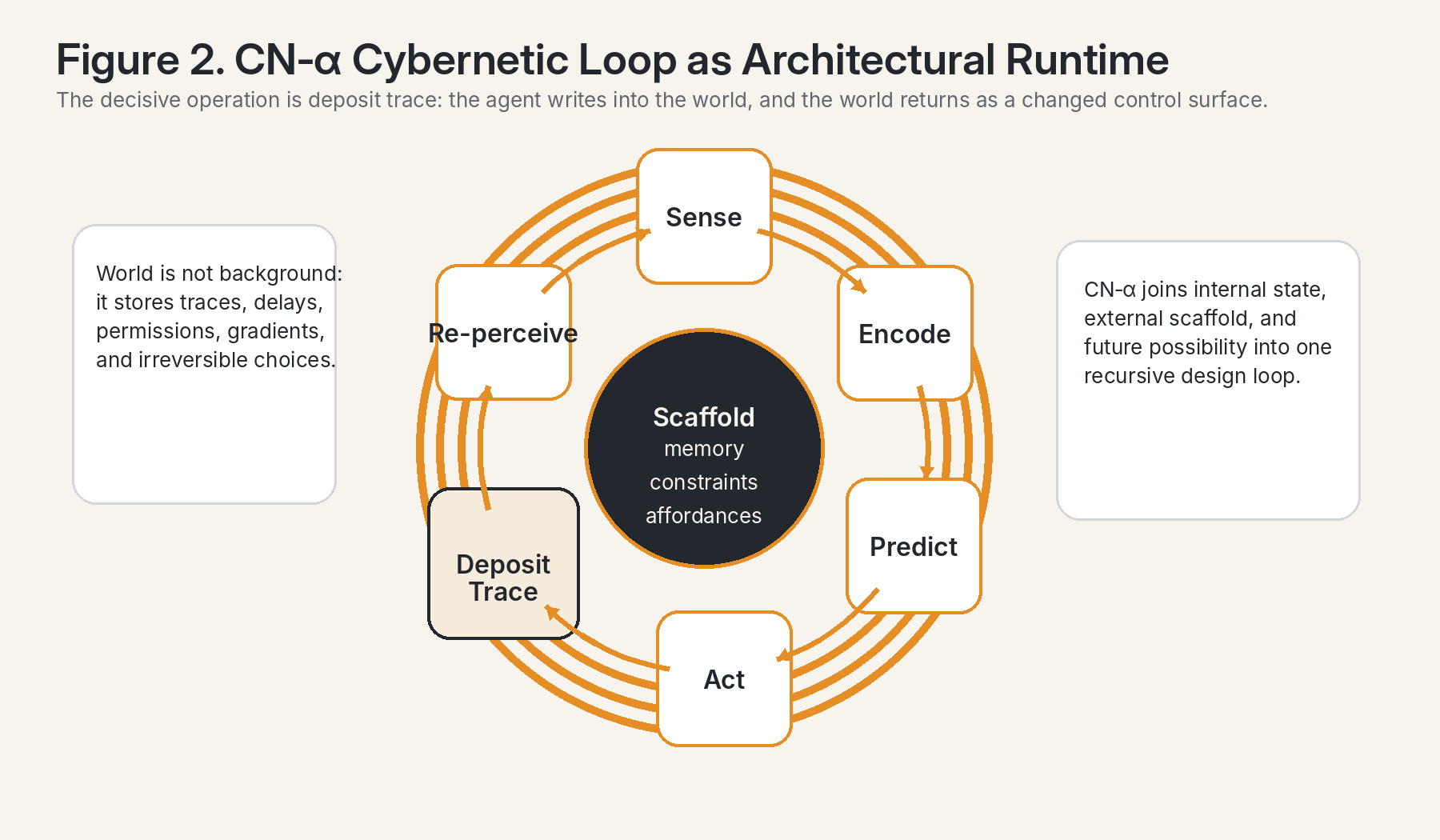

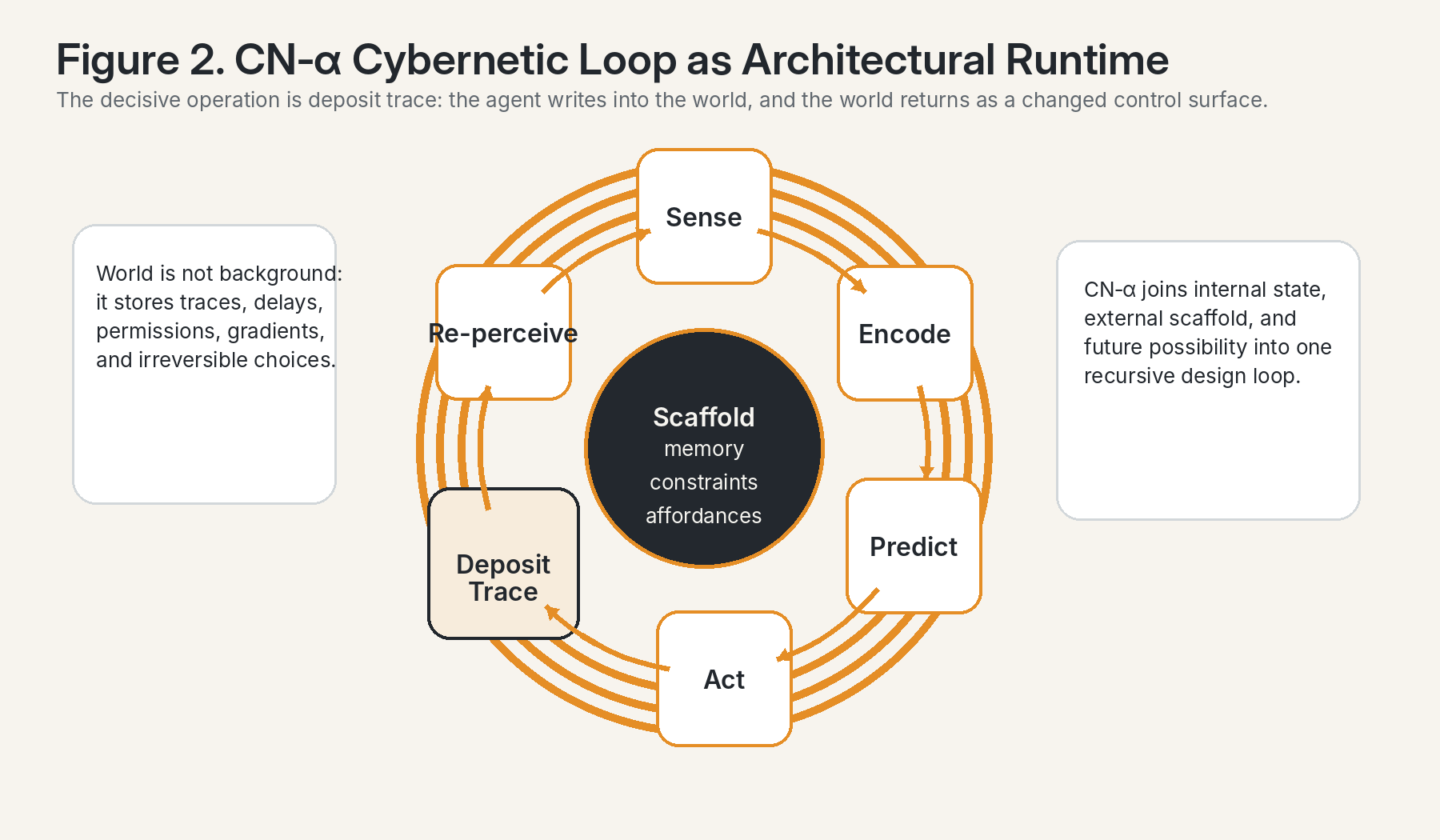

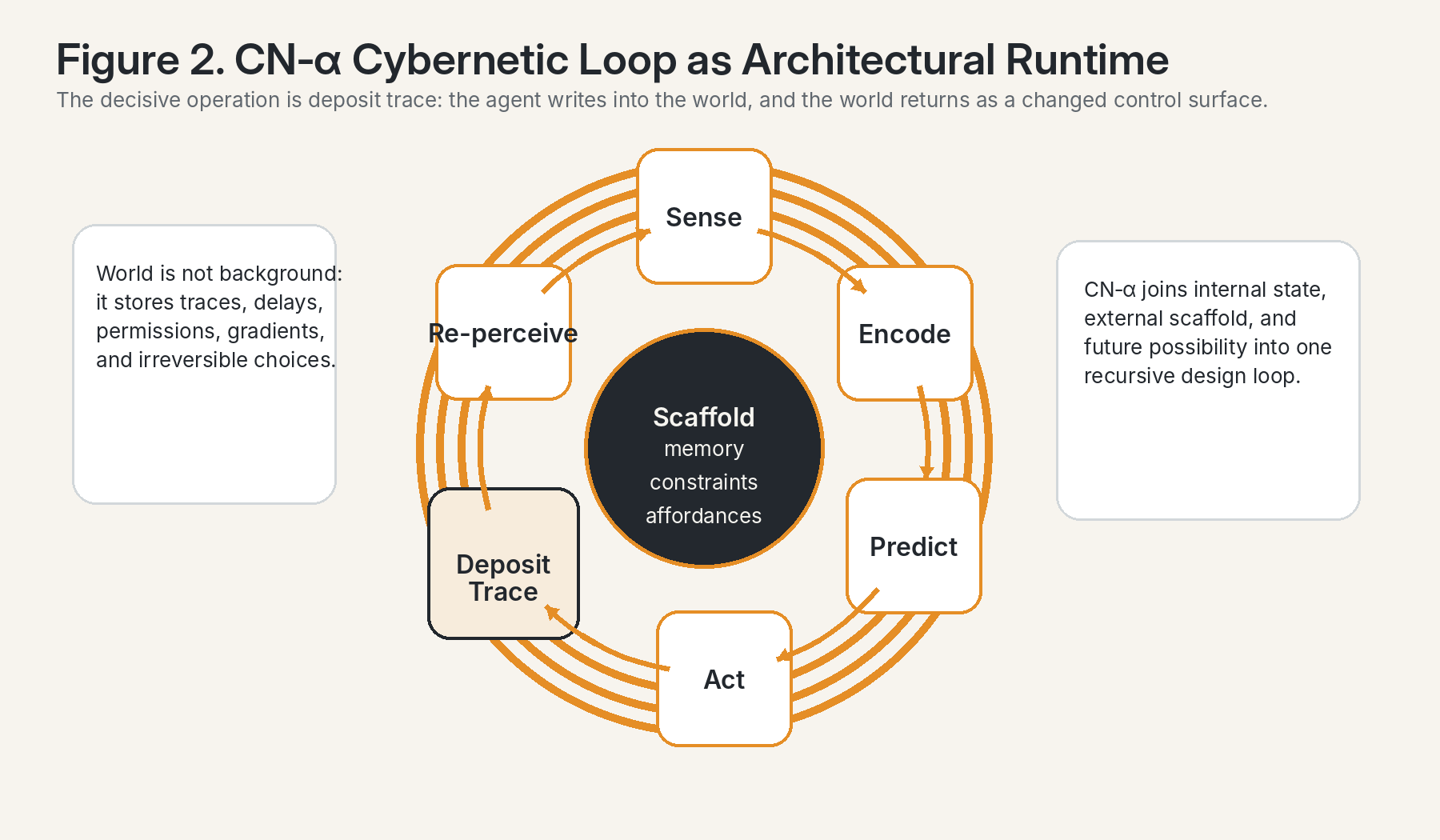

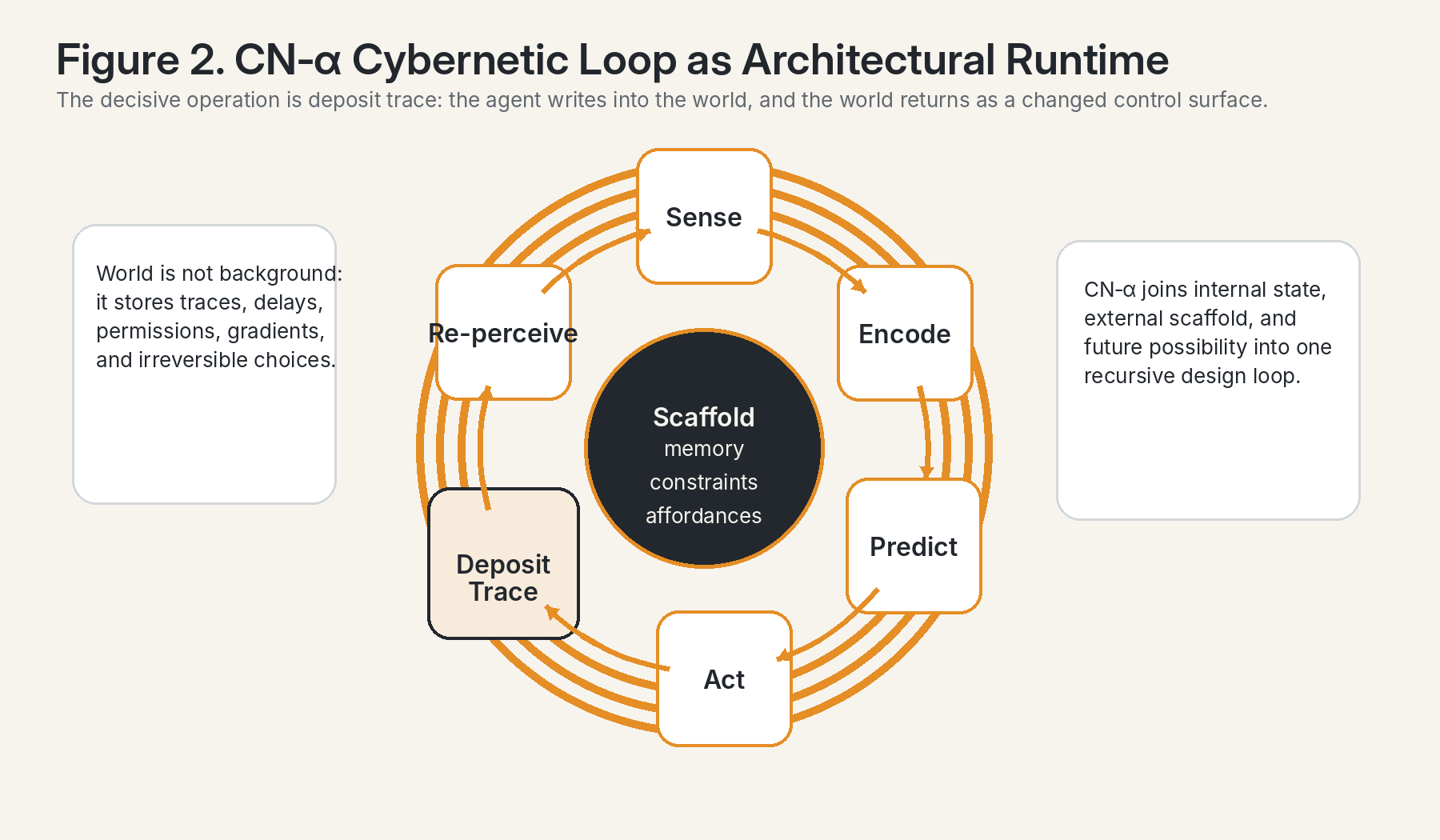

Continuity Nodes are the natural units of analysis once architecture is treated as compiling. A Continuity Node is not simply a node in a graph. It is a locus where internal state, external scaffold, and future possibility are locally bound together. It is where memory meets action under constraint. In that sense, a Continuity Node is a compiler-runtime interface: the point at which accumulated prior structure is translated into next-step executable behavior.

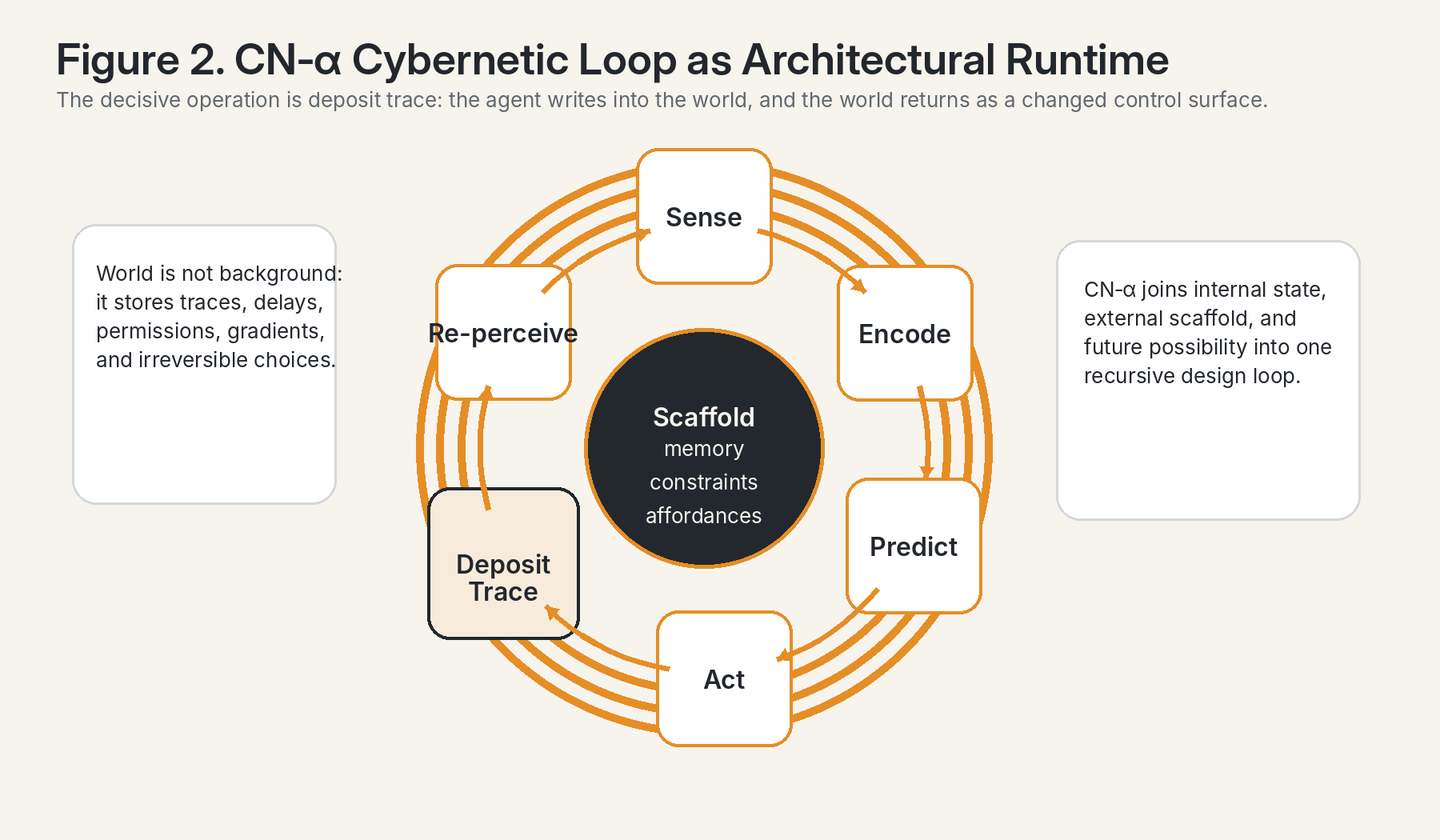

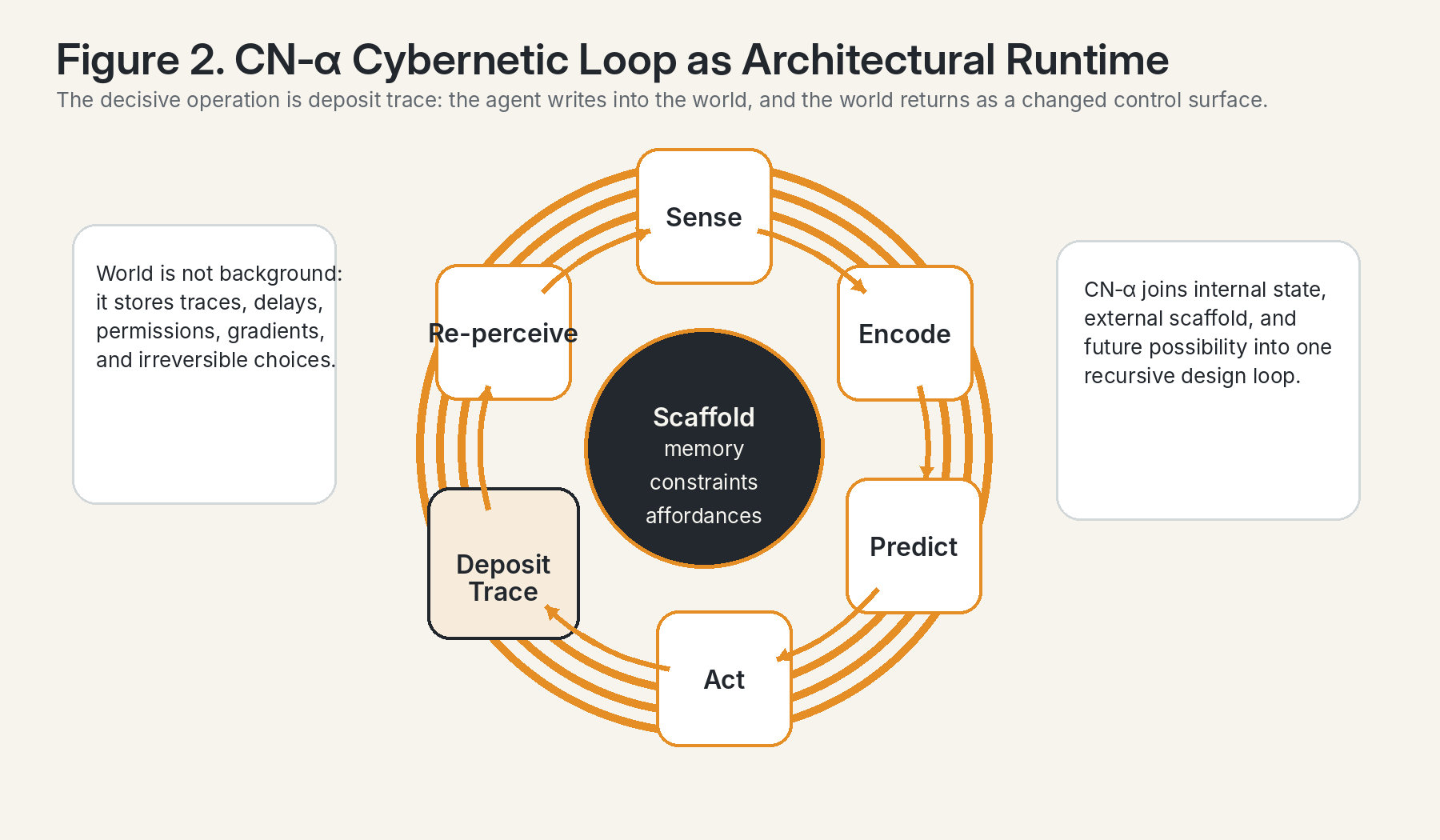

The CN-α loop formalizes this more precisely. Classical cybernetic diagrams often stop at sense, compare, and act. But for architectural systems that is insufficient. The crucial additional operation is deposit trace. A genuinely architectural loop therefore runs: sense → encode → predict → act → deposit trace → re-perceive. After action, the world is no longer the same world. It contains a residue of the act. That residue may be geometric, chemical, textual, digital, or institutional. Whatever its medium, it modifies the next field of perception and thus the next cycle of control.
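The six steps of the loop can be laid out as a short program. The sketch below is a toy rendering under heavy assumptions: the world is a ring of eight cells, the trace is a single number per cell, the policy simply prefers the less-marked neighbour, and the names `World` and `cn_alpha_cycle` are hypothetical. It is meant only to show where the deposit step sits and how a residue changes the next decision.

```python
from dataclasses import dataclass, field

@dataclass
class World:
    """A ring of cells, each holding a persistent trace level."""
    traces: list = field(default_factory=lambda: [0.0] * 8)
    decay: float = 0.9  # hypothetical evaporation applied at every deposit

    def sense(self, position):
        n = len(self.traces)
        return self.traces[(position - 1) % n], self.traces[(position + 1) % n]

    def deposit(self, position, amount=1.0):
        self.traces = [t * self.decay for t in self.traces]
        self.traces[position] += amount

def cn_alpha_cycle(world, position):
    left, right = world.sense(position)                # sense
    encoded = {"left": left, "right": right}           # encode
    # predict: assume the less-marked neighbour is the less-explored one
    step = -1 if encoded["left"] < encoded["right"] else 1
    position = (position + step) % len(world.traces)   # act
    world.deposit(position)                            # deposit trace
    return position, world.sense(position)             # re-perceive

blank = World()
marked = World(traces=[0.0, 5.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0])

print(cn_alpha_cycle(blank, 0)[0])   # 1: no residue, the agent steps right
print(cn_alpha_cycle(marked, 0)[0])  # 7: an earlier residue turns it left
```

The same agent with the same policy takes opposite first steps in the two worlds. The only difference is the trace already written into the second one, which is the sense in which the environment carries part of the control.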

This is why architecture cannot be reduced to backdrop. It is an active participant in cybernetic recursion. A CN-α loop does not only regulate itself against a given environment; it partially authors the environment against which it will next regulate itself. The loop therefore spans inside and outside, cognition and scaffold, policy and morphology. In engineered systems, this becomes the basis of adaptive workspaces, programmable matter, reconfigurable robotics, memory-bearing software agents, and hybrid AI-biological platforms.


The CN-α loop makes explicit that architecture is a runtime medium. The world stores traces, and those traces return as altered affordances, delays, permissions, and gradients.

7. Multiscale competency: biology already thinks architecturally

Recent biological theory strengthens the architectural reading of life. McMillen and Levin describe biology as a multiscale architecture in which nested levels—from molecules and cells to tissues, organisms, and swarms—solve problems in their own spaces [6]. Levin similarly argues that developmental biology exhibits multiscale competency architecture: cells, tissues, and organs are not passive outputs of genetic instruction but problem-solving agents with regulative plasticity across metabolic, transcriptional, physiological, and anatomical domains [10].

This has far-reaching implications. If biological matter is already agential in layered ways, then morphogenesis can be read as a grand act of compiling. Genes do not directly specify final anatomy in a one-step blueprint manner. Rather, they help configure lower-level agents and communication channels whose collective activity builds tissues, boundaries, gradients, and forms. The resulting body is therefore not just expressed; it is compiled through many intermediate negotiations. Architecture is not imposed onto life from outside. Life has always been architectural.

The same conclusion appears in variational and active-inference accounts of niche construction. Constant and colleagues show how niche and organism can become reciprocally synchronized under free-energy-bounding dynamics, with niche construction generating ecological inheritance [7]. In a closely related formulation, Bruineberg and collaborators argue that free-energy minimization should be understood not at the level of an isolated agent fitting a static world, but within a joint agent–environment system whose attractor structure is co-produced [8]. In plain terms: agents do not merely adapt to niches; they compile them. Niches are not only settings but persistent Bayesian supports for viability.

Once this point is absorbed, architecture reveals itself as a general operator of biological continuity. It is the means by which one scale stabilizes another and by which one moment leaves instructions for the next.

8. AGI architecture as agent of itself

The transition to AGI makes the architecture thesis more urgent, not less. Much current discourse still imagines AI architecture as something selected once by designers and then merely run by models. Yet the frontier literature on agent memory and self-evolving embodied AI already points beyond this picture. Pengfei Du’s 2026 survey formalizes agent memory as a write–manage–read loop coupled to perception and action [9]. This is already a move from static container to dynamic architectural process. Memory is not a warehouse beside the agent; it is a continuously edited spatial-temporal substrate that co-determines the agent’s effective cognition.
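A write, manage, read loop of this general kind can be sketched in a few lines. The class below is a hypothetical toy, not the formalization given in the survey: the capacity limit, the keyword-overlap retrieval, and the rule that forgets the least-used and then oldest record are all assumptions chosen for brevity.

```python
from dataclasses import dataclass

@dataclass
class Record:
    text: str
    written_at: int
    hits: int = 0

class AgentMemory:
    """Toy write-manage-read store; capacity and scoring rule are assumptions."""

    def __init__(self, capacity=3):
        self.capacity = capacity
        self.clock = 0
        self.records = []

    def write(self, text):
        self.clock += 1
        self.records.append(Record(text, self.clock))
        self.manage()

    def manage(self):
        # Forget the least-used, then oldest, record while over capacity.
        while len(self.records) > self.capacity:
            victim = min(self.records, key=lambda r: (r.hits, r.written_at))
            self.records.remove(victim)

    def read(self, query):
        words = set(query.lower().split())
        matches = [r for r in self.records if words & set(r.text.lower().split())]
        for r in matches:
            r.hits += 1  # reading also edits the store
        return [r.text for r in matches]

memory = AgentMemory(capacity=2)
memory.write("door is locked")
memory.write("key under mat")
recalled = memory.read("key")    # the retrieved record gains a hit
memory.write("lamp is broken")   # over capacity: the unread, oldest record goes
print(recalled, [r.text for r in memory.records])
```

Because retrieval changes which records survive the next management pass, the store is shaped by its own use, which is the point of describing memory as an edited substrate rather than a warehouse.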

Feng, Wang, and Zhu’s 2026 self-evolving embodied AI framework goes further. It defines agents that operate through memory self-updating, task self-switching, environment self-prediction, embodiment self-adaptation, and model self-evolution [11]. Once these modules are allowed to interact over time, architecture ceases to be merely what the agent uses. Architecture becomes what the agent repeatedly redesigns. The memory graph, the tool topology, the interface layout, the embodiment assumptions, the sampling strategy, and the audit channels all become mutable aspects of a living architecture.

At that point we can meaningfully speak of AGI architecture as an agent of itself. The architecture monitors its own bottlenecks, rewrites its own routing patterns, alters its own balance between persistence and forgetting, re-allocates computation across internal and external resources, and may eventually modify its own physical or biohybrid embodiments. The compiler and the runtime start to fold into each other.

This does not mean that we have already built such systems in full. It means that the direction of travel is clear: intelligence is moving from static model-inference toward recursive architectural self-governance. The next decisive advances will therefore occur not only at the level of larger models, but at the level of architectures that can redesign the conditions of their own future cognition.

9. Interfaces between AI and Artificial Life: compiled niches for coevolution

The interface between AI and Artificial Life should accordingly be redefined as an architectural question. When we build organoid platforms, synthetic ecologies, programmable bioreactors, swarm-robotic colonies, morphogenetic simulation environments, or adaptive wet-lab workcells, we are not merely adding tools around intelligence. We are constructing compiled niches in which new forms of intelligence and agency may emerge. The geometry of the chamber, the latency of feedback, the granularity of sensors, the topology of communication, the reversibility of interventions, the persistence of traces, and the thermodynamic cost of memory operations all become part of the phenotype of the system.

This is where the strict Dawkinsian criterion can return in a new synthetic key. A self-modifying AI/ALife habitat would not count as an extended phenotype merely because it is impressive. But if populations of agents repeatedly inherited, modified, and were differentially filtered by such architectures across generations, then those architectures could begin to function as synthetic extended phenotypes. They would no longer be neutral supports. They would be selectable externalized agencies.

For this reason, the design of AI/ALife interfaces should follow several architectural axioms. First, memory must be externalized in traceable substrates rather than hidden in opaque latent drift. Second, gate chemistry must be explicit: what can enter, exit, mutate, persist, or replicate should be architecturally legible. Third, Transfer Entropy should be used to map directionality across scales so that control pathways do not become accidental. Fourth, effective temperature should be adjustable, allowing systems to alternate between exploratory phases and canalized consolidation phases. Fifth, self-editing architecture should always be paired with audit architecture, because a system that can rewrite itself without interpretable traces becomes evolutionarily powerful but epistemically blind.
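The fifth axiom, that self-editing should be paired with audit, can be illustrated with a small sketch in which every change to a settings table appends an entry to a hash-chained log. The class name, the fields of each entry, and the use of SHA-256 chaining are assumptions made for illustration; they stand in for whatever audit architecture a real platform would adopt.

```python
import hashlib
import json

class AuditedArchitecture:
    """Toy self-editing settings table with an append-only, hash-chained log."""

    def __init__(self, settings):
        self.settings = dict(settings)
        self.log = []

    @staticmethod
    def _digest(body):
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def edit(self, key, value, reason):
        previous = self.log[-1]["digest"] if self.log else ""
        entry = {
            "key": key,
            "old": self.settings.get(key),
            "new": value,
            "reason": reason,
            "previous": previous,  # links this edit to the one before it
        }
        entry["digest"] = self._digest(entry)
        self.settings[key] = value
        self.log.append(entry)

    def verify(self):
        """Return True only if no logged edit has been altered or reordered."""
        previous = ""
        for entry in self.log:
            body = {k: v for k, v in entry.items() if k != "digest"}
            if entry["previous"] != previous or entry["digest"] != self._digest(body):
                return False
            previous = entry["digest"]
        return True

habitat = AuditedArchitecture({"effective_temperature": 1.0})
habitat.edit("effective_temperature", 0.5, "enter consolidation phase")
habitat.edit("gate.replicate", False, "freeze replication during audit")
print(habitat.verify())  # True while the log is untouched
```

Each entry records the old value, the new value, and a stated reason, so the history of self-modification stays readable; altering any past entry breaks the chain and makes `verify` return `False`.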

In this sense, the future laboratory, the future robotic ecology, and the future AI habitat are all one problem. They are problems of compiled worlds.


10. Conclusion: from buildings to living compilers

The proposition architecture is compiling is strong enough to connect Dawkinsian evolutionary theory, information thermodynamics, stigmergic construction, active-inference niche theory, multiscale biological agency, and emerging AGI research without flattening their differences. Dawkins tells us that external constructions matter when they are tied to differential selection. Information thermodynamics tells us that every stable distinction has an energetic price. Social-insect and niche-construction research tell us that built environments can carry instruction. KEGGO OS tells us that architecture is a gate-distribution problem measurable in informational and thermal terms. Continuity Nodes and CN-α loops tell us that cognition is recursive world-writing. AGI research tells us that architectures are beginning to become self-editing, self-routing, and embodiment-sensitive.

What emerges from the synthesis is a powerful reframing: life, mind, and advanced artificial systems do not merely compute in space. They increasingly compute by turning space into executable memory. Architecture is therefore not a peripheral art around intelligence. It is one of the principal modes through which intelligence acquires duration, inheritance, and material consequence.

The deepest implication for the AI/Artificial Life frontier is that the decisive contest will not be between software and biology, or between virtuality and embodiment, but between inferior and superior forms of architectural compilation. The systems that matter most will be those capable of building worlds that remember, worlds that route, worlds that cool and heat search intelligently, and worlds that allow agency to extend beyond the boundary of the present body while remaining measurable, governable, and scientifically legible.

Selected references

[1] Hunter, P. (2009). Extended phenotype redux: How far can the reach of genes extend in manipulating the environment of an organism? EMBO Reports, 10(3).

[2] Hunter, P. (2018). The revival of the extended phenotype: After more than 30 years, Dawkins’ Extended Phenotype hypothesis is enriching evolutionary biology and inspiring potential applications. EMBO Reports, 19(7): e46477.

[3] Georgescu, I. (2021). 60 years of Landauer’s principle. Nature Reviews Physics, 4, 101–102.

[4] Ireland, T. & Garnier, S. (2018). Architecture, space and information in constructions built by humans and social insects: A conceptual review. Philosophical Transactions of the Royal Society B, 373(1753): 20170244.

[5] Khuong, A. et al. (2016). Stigmergic construction and topochemical information shape ant nest architecture. Proceedings of the National Academy of Sciences, 113(5), 1303–1308.

[6] McMillen, P. & Levin, M. (2024). Collective intelligence: A unifying concept for integrating biology across scales and substrates. Communications Biology, 7:378.

[7] Constant, A. et al. (2018). A variational approach to niche construction. Journal of the Royal Society Interface, 15(141): 20170685.

[8] Bruineberg, J. et al. (2018). Free-energy minimization in joint agent-environment systems: A niche construction perspective. Journal of Theoretical Biology, 455, 161–178.

[9] Du, P. (2026). Memory for Autonomous LLM Agents: Mechanisms, Evaluation, and Emerging Frontiers. arXiv:2603.07670.

[10] Levin, M. (2023). Darwin’s agential materials: evolutionary implications of multiscale competency in developmental biology. Cellular and Molecular Life Sciences, 80:142.

[11] Feng, T., Wang, X., & Zhu, W. (2026). Self-evolving Embodied AI. arXiv:2602.04411.

Research trajectories for SignalSense / Continuity Nodes

• Model architectural compilation in digital organisms as a measurable transition from volatile search to stabilized gate ecologies.

• Use Transfer Entropy to map directional control between internal memory graphs, external tool layers, and laboratory scaffolds.

• Develop CN-α benchmark environments in which agents must improve performance by writing interpretable traces into shared space.

• Treat AI/Artificial Life platforms as thermodynamic architectures, explicitly tracking irreversible choices, energy budgets, and memory persistence.

• Study when self-modifying AI habitats begin to behave like synthetic extended phenotypes under intergenerational selection.

This document is intended as a conceptual research essay rather than a claim that every use of the term “architecture” maps one-to-one onto formal evolutionary categories. The distinctions among extended phenotype, niche construction, and technical-cultural scaffolding remain important throughout.
